Why no one visits your new site

Your website is live: more than two dozen product detail pages are up, the company introduction is clearly written, and you even post a new article to your WeChat official account every day. Yet a search for your own brand name puts you on the third page, and organic traffic amounts to a few dozen visitors a month. At this point you probably suspect: is the content not good enough? Should you run more ads?

Stop. Don't rush to buy traffic or publish ten more advertorials just yet.

The traffic woes of most new sites are not a content problem at all but a failure of the underlying structure. Search engines (Baidu, Google) do not go looking for your site the way a curious visitor might. They rely on crawler programs (spiders) that follow links, and if you haven't opened the door and put up signposts, the spiders simply can't find the entrance, let alone index your pages.

Many people understand SEO as “more articles, more keywords”, which is a dangerous misunderstanding. SEO is neither a cheating tool nor a keyword-stuffing contest; it is a way of demonstrating your site's value to search engines. The essence is to help the search engine understand that when a user searches for a certain problem, your website provides a more professional, more complete answer than your peers. If you haven't even completed the basic step of “being found”, all later content optimization is wasted effort.

A new site must solve three problems to get SEO started: being discovered (spiders can crawl it), being recognized (content gets into the index), and being recommended (keywords earn rankings). Fall short on any one of these steps and you lose a whole layer of traffic.

The most important thing to do now is not to rush out your next post but to check: Has the sitemap been submitted? Is robots.txt configured correctly? Can spiders crawl and understand your URL structure? If these technical foundations aren't solid, the more content you publish, the more of it goes to waste.

Next, let's tackle “being found”: making sure search engines can actually find your site.

Step 1: Nail the crawl so search engines can find you

Since weak technical fundamentals are the root of your traffic woes, your first job is not to write content but to show search engines the way.

Create the XML sitemap first

Search engine spiders need a “list of pages” to find your content, and that list is the XML sitemap. What you have to do is simple: use an SEO plugin (such as Yoast SEO or Rank Math) or Baidu's sitemap generation tool to generate an XML file that lists the home page, the category pages, and every article/product page.
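If your CMS has no sitemap plugin, the file is simple enough to generate yourself. The sketch below follows the sitemaps.org protocol (a `<urlset>` of `<url>` entries, each with a `<loc>` and an optional `<lastmod>`); the page list is hypothetical, and the 50,000-URL cap is the protocol's per-file limit, beyond which you would split the list and add a sitemap index.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000  # per-file limit in the sitemaps.org protocol


def build_sitemap(pages: list[tuple[str, date]]) -> bytes:
    """Return sitemap XML (UTF-8 bytes) for (absolute URL, last-modified date) pairs."""
    if len(pages) > MAX_URLS_PER_FILE:
        raise ValueError("Too many URLs: split into several sitemaps plus a sitemap index")
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod.isoformat()  # e.g. 2026-03-02
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


# Hypothetical pages: home page, one category page, one article
pages = [
    ("https://example.com/", date(2026, 3, 1)),
    ("https://example.com/seo-jiqiao/", date(2026, 3, 1)),
    ("https://example.com/seo-jiqiao/10-fangfa", date(2026, 3, 2)),
]
sitemap_xml = build_sitemap(pages)
```

Save the returned bytes as `sitemap.xml` at the site root and submit that file's URL on both platforms.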

Once generated, do two separate things:

- Open Google Search Console and submit the XML sitemap.

- Open the Baidu Search Resource Platform and submit the XML sitemap.

This step costs nothing, and the gain is that spiders no longer have to “guess” which pages you have; they crawl directly “by the map”. Most new sites go months after launch without submitting a sitemap, so spiders can only follow a few scattered links from the home page and reach less than a tenth of the pages that actually exist.

Next, check the robots.txt file

This is the most common elementary mistake new sites make: accidentally blocking the entire site. Open your browser, go to your-domain.com/robots.txt, and look over the file's contents. If you see something like this:

User-agent: *
Disallow: /

Change it right away. “Disallow: /” bans all search engines from crawling the whole site; it is the equivalent of locking your front door.

The correct approach is to allow crawling of the main directories and block only pages that don't need to be indexed (e.g. back-end login pages, on-site search result pages). If you're not sure how to write the rules, the simplest option is to keep just two lines:

User-agent: *
Allow: /
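Before and after editing the file, you can sanity-check it with Python's standard-library `urllib.robotparser`. This is a sketch: the three rule sets and the test URL are hypothetical, and Python's parser applies the first matching rule in file order, which is simpler than the longest-match rule Google documents, so treat a pass here as a quick check rather than proof of how Googlebot or Baiduspider will read the file.

```python
from urllib.robotparser import RobotFileParser


def is_crawlable(robots_txt: str, url: str, user_agent: str = "Baiduspider") -> bool:
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# The mistake described above: the whole site is locked
BLOCK_ALL = "User-agent: *\nDisallow: /\n"
# The safe two-line fallback
ALLOW_ALL = "User-agent: *\nAllow: /\n"
# Hypothetical targeted rules: hide only the admin login and on-site search results
TARGETED = "User-agent: *\nDisallow: /wp-admin/\nDisallow: /search\n"

for name, rules in [("BLOCK_ALL", BLOCK_ALL), ("ALLOW_ALL", ALLOW_ALL), ("TARGETED", TARGETED)]:
    print(name, is_crawlable(rules, "https://example.com/seo-jiqiao/10-fangfa"))
```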

Keep the URL hierarchy to three levels or fewer

Spiders crawl by following URLs, and the shallower a page sits in the hierarchy, the more weight it carries.

- Good URL: example.com/seo-jiqiao/10-fangfa (three levels: domain/category/content)

- Poor URL: example.com/category/2023/06/post-title (five levels: too deep, so weight is diluted)

Open a few pages of your own site and look at the address bar. If you see an endless path like /a/b/c/d/..., you need to switch to static URLs. Most CMSes have a “permalink” setting in the back end that changes URLs to a concise form such as /category-name/post-name.
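To audit many URLs at once instead of eyeballing the address bar, a small helper can count levels the way this section does (the domain is level 1, and each path segment adds one). `url_depth` and `too_deep` are hypothetical helpers, not part of any CMS; the URLs are the two examples above.

```python
from urllib.parse import urlsplit


def url_depth(url: str) -> int:
    """Count levels as in the text: the domain is level 1, each non-empty path segment adds 1."""
    if "//" not in url:
        url = "//" + url  # lets urlsplit treat a bare "example.com/..." as host + path
    path = urlsplit(url).path
    return 1 + len([segment for segment in path.split("/") if segment])


def too_deep(urls: list[str], max_depth: int = 3) -> list[str]:
    """Return the URLs that exceed the three-level guideline."""
    return [u for u in urls if url_depth(u) > max_depth]


print(too_deep([
    "example.com/seo-jiqiao/10-fangfa",
    "example.com/category/2023/06/post-title",
]))
```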

Organize the site as a tree

Think of your website as a tree: the root is the homepage, the trunk is the channel/category pages, and the leaves are specific content pages.

Starting from the home page, users should be able to reach any important page in at most three clicks, and the same goes for spiders. The benefit of a tree structure is a clear hierarchy that is easy to extend: adding ten new sections won't break the existing crawl paths.

In practice: check whether the navigation bar covers the main categories, whether each category has a list page, and whether each list page links to the individual content pages. If a category has no entry point in the navigation, spiders will hardly ever crawl its pages.

Interlinking related pages through internal links

Spiders follow links to jump from one page to another. If one of your articles mentions a topic you have covered elsewhere, insert a hyperlink in the body text pointing to that article. This is internal linking.

Another common beginner mistake is treating each article as an island, with no internal links to other articles. Spiders leave after crawling such an article and have no reason to come back. The fix is simple: every time you publish a new article, add at least 2-3 links to it from older articles.

One easy method is to add a “Related posts” module at the bottom of older articles so that every article becomes an entry point to others. Over time the whole site forms an interconnected network; spiders visit repeatedly, and crawl efficiency doubles.
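If you can export each page's internal links (from a crawler or your CMS database; the dictionary shape here is an assumption), counting inbound links per page quickly surfaces the “islands” described above. The page paths are hypothetical.

```python
from collections import Counter


def inbound_link_counts(links: dict[str, list[str]]) -> Counter:
    """links maps each page to the pages it links to; count how many other pages link to each page."""
    counts = Counter({page: 0 for page in links})
    for source, targets in links.items():
        for target in set(targets):  # count each linking page once
            if target != source:
                counts[target] += 1
    return counts


def islands(links: dict[str, list[str]], home: str = "/") -> list[str]:
    """Pages (other than the home page) that no other page links to."""
    counts = inbound_link_counts(links)
    return sorted(page for page, n in counts.items() if n == 0 and page != home)


site = {
    "/": ["/seo/", "/seo/what-is-seo"],
    "/seo/": ["/seo/what-is-seo"],
    "/seo/what-is-seo": [],
    "/seo/marketing-company-pricing": ["/seo/what-is-seo"],  # new article: nothing links to it yet
}
print(islands(site))
```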

Once these five things are done, can search engines find your website? Yes. But being found doesn't mean being indexed; that is the next problem to solve.

Step 2: Nail indexing and get your content into the search engine's candidate pool

Spiders have now followed your XML sitemap and internal links to crawl the whole site. But there is a key distinction here: being crawled only means a spider “passed through”, while being indexed means the page has entered the search engine's candidate database. Only pages in the index are eligible to rank and earn organic traffic. Many webmasters see crawl records in their server logs and assume everything is fine, when in fact the pages never made it into the index, which is exactly why their search traffic stays at zero.

You need to give it a push. Open the Baidu Search Resource Platform and find the “general indexing” tool: you can manually submit 25 URLs per day, a single file supports up to 50,000 links, and up to 200 files can be submitted in total. Google Search Console also supports active submission: enter a specific page address in the “URL Inspection” tool, then click “Request indexing”. Active submission is not cheating; it sends the search engine a clear message that “this page is ready to be evaluated”, and it can noticeably shorten the wait between crawling and indexing.
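Baidu also offers an API push method alongside manual submission. The sketch below only builds the HTTP request, following the endpoint format Baidu has published for that method (`http://data.zz.baidu.com/urls?site=...&token=...`, with URLs sent as newline-separated plain text); treat the endpoint as an assumption to confirm in your Search Resource Platform account, where you also get your token. `max_urls` is a placeholder for whatever daily quota your account shows.

```python
from urllib.parse import urlencode
from urllib.request import Request

# Assumption: endpoint format of Baidu's API push; confirm it in your Search Resource Platform account
BAIDU_PUSH_ENDPOINT = "http://data.zz.baidu.com/urls"


def build_baidu_push(site: str, token: str, urls: list[str], max_urls: int = 25) -> Request:
    """Build (but do not send) a POST request that submits up to max_urls URLs."""
    batch = urls[:max_urls]
    query = urlencode({"site": site, "token": token})
    body = "\n".join(batch).encode("utf-8")  # one URL per line
    return Request(
        f"{BAIDU_PUSH_ENDPOINT}?{query}",
        data=body,
        headers={"Content-Type": "text/plain"},
        method="POST",
    )


req = build_baidu_push("https://www.example.com", "YOUR_TOKEN",
                       ["https://www.example.com/seo-jiqiao/10-fangfa"])
# To actually submit: urllib.request.urlopen(req), then read the JSON response body.
```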

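Server stability is an invisible threshold for successful indexing. If a spider runs into a 504 error or a connection timeout on three consecutive visits, it will judge the site unreliable and crawl less often, or even stop crawling altogether. Make sure your server's uptime stays above 99.9% and page response time stays under 3 seconds. If you are on shared virtual hosting, be sure to check whether any other site on the same IP has been penalized by search engines for violations: that damages the crawl reputation of the whole server and indirectly slows your indexing.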
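To make the 99.9% figure concrete: it leaves about 43 minutes of downtime in a 30-day month. The sketch below does that arithmetic and summarizes results from your own uptime probes. The `(status, seconds)` probe format and the convention that status 0 means a connection timeout are hypothetical; the 3-second threshold and the “three consecutive failures” pattern come from the text.

```python
def allowed_downtime_minutes(target_uptime: float = 0.999, days: int = 30) -> float:
    """Minutes of downtime an uptime target allows over `days` days."""
    return days * 24 * 60 * (1 - target_uptime)


def is_failure(status: int) -> bool:
    """Hypothetical convention: 0 = timeout/refused connection; 5xx (e.g. 504) = server error."""
    return status == 0 or status >= 500


def summarize_checks(checks: list[tuple[int, float]], slow_after: float = 3.0) -> dict:
    """checks holds (HTTP status, response time in seconds) per probe, oldest first."""
    if not checks:
        raise ValueError("no checks recorded")
    successes = [seconds for status, seconds in checks if not is_failure(status)]
    longest_outage = current = 0
    for status, _ in checks:
        current = current + 1 if is_failure(status) else 0
        longest_outage = max(longest_outage, current)
    return {
        "uptime": len(successes) / len(checks),
        "slow_responses": sum(1 for seconds in successes if seconds > slow_after),
        "max_consecutive_failures": longest_outage,  # 3+ is the pattern that discourages spiders
    }


print(round(allowed_downtime_minutes(), 1))
print(summarize_checks([(200, 0.8), (200, 3.4), (504, 30.0), (0, 30.0), (504, 30.0), (200, 1.2)]))
```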

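Content originality determines whether a page gets through the index filter. The indexing system compares pages against its existing index in real time, and highly homogeneous pages are filtered out at the indexing stage even if they were crawled: a search engine has no need to store the 10,001st beginner's primer on “What is SEO”. Make sure every piece adds unique information, whether exclusive survey data, concrete hands-on case studies, or a precise answer to a specific long-tail search intent. Remember, indexing is not a reward; it is the search engine's baseline judgment that you are qualified to compete for traffic.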
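Search engines don't publish how their duplicate filters work, but you can screen your own drafts for heavy overlap with an existing page. The sketch below uses a generic technique, character shingles compared by Jaccard similarity (character shingles also suit Chinese, which has no spaces between words). It is not any search engine's method, and the 0.5 threshold is an arbitrary assumption to tune.

```python
def shingles(text: str, k: int = 5) -> set[str]:
    """All k-character substrings, ignoring whitespace (works for Chinese and English alike)."""
    compact = "".join(text.split())
    if len(compact) < k:
        return {compact}
    return {compact[i:i + k] for i in range(len(compact) - k + 1)}


def jaccard(a: str, b: str, k: int = 5) -> float:
    """Shared shingles divided by all distinct shingles (0 = nothing shared, 1 = identical sets)."""
    sa, sb = shingles(a, k), shingles(b, k)
    return len(sa & sb) / len(sa | sb)


def looks_duplicated(draft: str, existing: str, threshold: float = 0.5) -> bool:
    """Flag a draft whose shingle overlap with an existing page reaches the (assumed) threshold."""
    return jaccard(draft, existing) >= threshold


page = "SEO is the practice of helping search engines understand your pages."
print(jaccard(page, page + " Really."))
```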

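Publishing new content at a fixed time every day builds a regular crawling habit in spiders far better than sporadic bursts of effort. When you keep a steady update rhythm (say, 2-3 articles a day), spiders develop a kind of body clock: they crawl new content within hours of publication and push it on into indexing. Conversely, if you publish 20 articles in one week and then go quiet for two weeks, spiders cannot predict when to come back, and the indexing delay stretches from hours to weeks, seriously hurting traffic to time-sensitive content.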

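Once these steps are done, your pages officially enter the search engine's candidate database and hold an “entry ticket” to compete for traffic. But being indexed doesn't mean ranking near the top: thousands of indexed pages are queued up for the same keyword, so why should the search engine recommend your content to searchers first? Earning that takes more careful work on keyword placement and search-intent matching.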

Step 3: Lay out keywords, starting with long-tail terms to build traffic fast

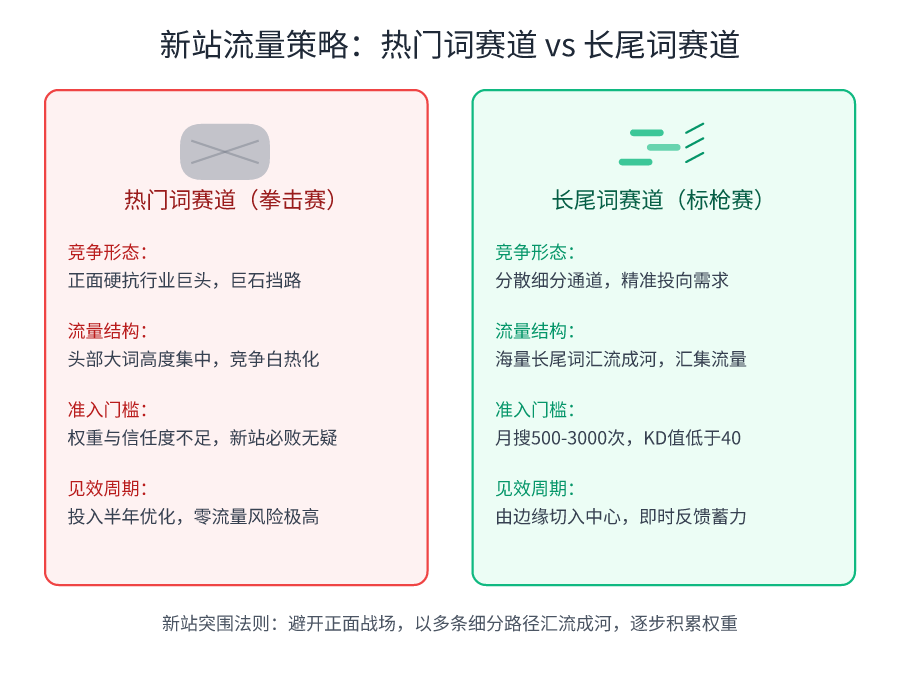

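Once your pages are in the search engine's index, you hold an “entry ticket” to compete for traffic. Now, facing thousands of “contestants” for the same popular term, how should you plan your first event? Going straight into the “boxing match” (high-volume industry terms) is almost certain defeat; for a new site, the most pragmatic way to build volume is to enter a “javelin throw”. You need a javelin of your own, namely specific, niche, low-competition long-tail keywords, thrown precisely at users with a clear need.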

Long-tail terms fill a new site's traffic gap

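A common misconception is that a website only succeeds once it wins popular terms like “marketing company” or “interior design”. In reality, a new site is nowhere near able to compete head-on with industry giants, in either on-site authority or external trust. Pouring time into optimizing popular terms only leaves you frustrated after six months of waiting with no traffic to show for it.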

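You have to face a reality: the vast majority of organic traffic comes not from a few core head terms but from a huge number of long-tail keywords added together. For a new site, long-tail terms with 500 to 3,000 monthly searches and a keyword difficulty (KD) below 40 are the best starting line.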

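Don't underestimate the value of each long-tail term. The term “Putuo District professional marketing company” contains two popular core terms, “marketing” and “marketing company”. If you create a high-quality piece around it and earn a ranking, the search engine will gradually tag your site as relevant to “marketing”. Starting with long-tail terms builds authority “from the edges toward the center”: it doesn't stop you from competing for popular terms later, and every optimization step produces concrete traffic feedback that gives you the confidence to keep going.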

Hands-on: dig up your first batch of long-tail “javelins”

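Now you need to find these terms. Don't guess; use tools. For Google optimization, the main tool is Google Keyword Planner, which lives inside the Google Ads platform. You can register a Google Ads account (no funds required) and use its Keyword Planner. Enter your seed term (e.g. “marketing company”) in the “Discover new keywords” search box, and the tool returns a large list of related keywords with estimated monthly searches and competition levels.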

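For Baidu optimization, Chinese tools such as 5118 or webmaster tool sites are better choices. They provide keyword data drawn from Baidu's search data, again with search volume and difficulty. Remember, the filtering criteria are very specific: 500-3,000 monthly searches and a difficulty below 40. Copy every term that meets them into an Excel spreadsheet.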
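If you export the tool's results as CSV, the filter can be applied in a few lines. This is a sketch: the `keyword`, `volume`, and `kd` column names and the sample rows are hypothetical, since Keyword Planner and 5118 exports use their own headers, so map their columns to these three first.

```python
import csv
import io


def shortlist(rows, min_volume: int = 500, max_volume: int = 3000, max_kd: float = 40) -> list[dict]:
    """Keep terms with min_volume <= monthly searches <= max_volume and difficulty below max_kd."""
    picked = []
    for row in rows:
        volume = int(row["volume"])
        kd = float(row["kd"])
        if min_volume <= volume <= max_volume and kd < max_kd:
            picked.append(row)
    return picked


def to_csv(rows: list[dict]) -> str:
    """Serialize the shortlist so it can be opened in Excel."""
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=["keyword", "volume", "kd"])
    writer.writeheader()
    writer.writerows(rows)
    return out.getvalue()


# Hypothetical export
EXPORT = """keyword,volume,kd
marketing company,40000,78
marketing company pricing,1200,32
how to write a marketing plan,2600,35
putuo district marketing company,300,12
"""

candidates = shortlist(csv.DictReader(io.StringIO(EXPORT)))
print(to_csv(candidates))
```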

🔗 Related resources: Google Ads Keyword Planner

Google's official keyword research tool

🔗 Related resources: 5118 data platform

Chinese keyword mining and SEO data analysis tool

Separate traffic by value: target “informational terms” and “conversion terms”

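Not all long-tail terms carry the same commercial value. Once you have a batch of candidates, sort them into two categories; this determines your content strategy and what you can expect from each piece.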

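The first category is informational keywords, such as “how to write a marketing plan” or “what a marketing proposal includes”. Users searching these terms are usually at the early stage of recognizing a problem or gathering information, with no clear purchase intent. The core goal of content for these terms is to build industry authority and trust. Such articles are often widely shared and cited, giving your site a solid professional foundation and steadily building brand impressions.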

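The second category is long-tail conversion terms, such as “marketing company pricing” or “Shanghai website development outsourcing cost”. Behind these searches is a user with a very clear need: they may already have a budget and be comparing vendors, one step away from placing an order. These terms bring sparse but highly targeted traffic with very high conversion rates. For a new site, capturing this traffic offers the best return on investment.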

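Add a column to your spreadsheet and classify each long-tail term. Split your content resources roughly 7:3: 70% for informational content that builds your professional image, and 30% for precisely capturing long-tail terms with high conversion intent.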
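A rough first pass at the new column can be automated with cue words, leaving anything ambiguous for a human. In this sketch the cue lists are hypothetical English stand-ins (adapt them to your language and niche), and `allocate` simply applies the 7:3 split to however many articles you plan.

```python
INFORMATIONAL_CUES = ("how to", "what", "why", "guide", "include")  # hypothetical cue words
CONVERSION_CUES = ("price", "pricing", "cost", "fee", "quote", "outsourcing")  # hypothetical cue words


def classify(keyword: str) -> str:
    """Conversion cues win over informational ones; terms matching neither need a human look."""
    text = keyword.lower()
    if any(cue in text for cue in CONVERSION_CUES):
        return "conversion"
    if any(cue in text for cue in INFORMATIONAL_CUES):
        return "informational"
    return "review"


def allocate(total_articles: int, informational_share: float = 0.7) -> dict:
    """Split a content budget 7:3 between informational and conversion pieces."""
    informational = round(total_articles * informational_share)
    return {"informational": informational, "conversion": total_articles - informational}


for term in ["how to write a marketing plan", "marketing company pricing", "putuo district marketing company"]:
    print(term, "->", classify(term))
print(allocate(10))
```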

Core principle: build around search intent, not keyword stuffing

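With terms found and classified, the easiest mistake to make next is “keyword stuffing”. You may think that repeating the term in the title, paragraphs, and image descriptions will lift your ranking, but that is exactly the outdated practice search engines dislike most. In 2026, search engine AI focuses on how completely and how well your page resolves the user's “search intent”.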

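Why does a user search “marketing company pricing”? Not to see a page repeat the word “pricing” over and over. What they need is a clear table of how prices are structured (per project or package price), a list of the core factors that affect pricing (such as market size and scope of service), a reference price range for the industry, and an explanation of any extra charges that may apply.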

So your content creation process should be:

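- Lock in one target long-tail term.

- In one sentence, write down the core problem that someone searching this term most wants to solve. (That is the search intent.)

- Plan the content's sections around that intent. Do you need to explain concepts? List steps? Compare options and prices? Point out pitfalls to avoid?

- While keeping the language natural, make sure the keyword appears in the most important places: the page title (Title), the main heading (H1), and the description within the first 100 characters. Everything else should focus on answering the question clearly, completely, and logically.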
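The last step can be spot-checked automatically. Below is a sketch using Python's standard `html.parser`; it assumes the keyword must appear verbatim (case-insensitive), and it reads “first 100 characters” as the first 100 characters of visible body text outside the H1, which is one reasonable interpretation. The sample page is hypothetical.

```python
from html.parser import HTMLParser


class _PageText(HTMLParser):
    """Collects <title> text, <h1> text, and other visible body text separately."""

    def __init__(self) -> None:
        super().__init__()
        self.title = ""
        self.h1 = ""
        self.body_parts: list[str] = []
        self._open: list[str] = []  # stack of currently open tags

    def handle_starttag(self, tag, attrs):
        self._open.append(tag)

    def handle_endtag(self, tag):
        # Pop back to the matching open tag (tolerates unclosed void tags such as <img>)
        if tag in self._open:
            while self._open.pop() != tag:
                pass

    def handle_data(self, data):
        if "script" in self._open or "style" in self._open:
            return
        if "title" in self._open:
            self.title += data
        elif "h1" in self._open:
            self.h1 += data
        elif "body" in self._open:
            self.body_parts.append(data)


def keyword_placement(html: str, keyword: str) -> dict[str, bool]:
    """Report whether the keyword appears in the title, the H1, and the first 100 body characters."""
    page = _PageText()
    page.feed(html)
    page.close()
    kw = keyword.lower()
    intro = " ".join(" ".join(page.body_parts).split())[:100].lower()
    return {
        "title": kw in page.title.lower(),
        "h1": kw in page.h1.lower(),
        "first_100_chars": kw in intro,
    }


SAMPLE = ("<html><head><title>Marketing company pricing: 2026 price ranges</title></head>"
          "<body><h1>Marketing company pricing explained</h1>"
          "<p>Wondering about marketing company pricing? This guide breaks down how fees are built.</p>"
          "</body></html>")
print(keyword_placement(SAMPLE, "marketing company pricing"))
```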

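Content built around user intent naturally outperforms copycat pages that only stuff keywords. The search engine's AI recognizes that your page offers a more professional, more complete answer, and gives it a higher initial score and a better chance to rank. That is the real reason a new site can break through quickly on long-tail terms.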

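After finishing a long-tail article, try a small test: cover the title and read the whole article through. Ask yourself whether it clearly and completely answers the search-intent question you wrote down at the start. If it does, the piece has what it needs to compete in search. If not, go back and revise it until it does.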

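With keywords precisely chosen and placed, your new site has the “ammunition” for its first real traffic, loaded in the most effective order. The next questions are how to establish a sustainable firing rhythm and how to make these individual rounds work together into an ever stronger on-site network of fire. This is no longer a single shot but an ongoing campaign.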

Content publishing rhythm and internal linking strategy

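You have a keyword direction and know how to write around search intent. The most common mistake at this point is either publishing a dozen articles in one go and then disappearing for months, or posting only when you happen to remember. Either way, search engines can never settle into a stable crawling pattern on your site.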

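Search engine spiders, like people, prefer “predictability”. Update every day and they come by every day; go ten days or two weeks without updating and they visit less often. A regular update rhythm lets spiders form a “visiting body clock”, which matters especially for a new site: steady output is how you earn the search engine's trust.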

2-3 articles per week is the most practical starting pace

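Don't chase ten articles a day just to feel productive. A new site's content capacity is limited, and forcing volume only drags quality down. The right approach is to produce 2-3 high-quality long-tail articles per week, written strictly around search intent. Keep each article to 800-1,500 characters and cover one problem thoroughly; that beats covering ten problems poorly.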
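To check whether you are actually holding the 2-3-per-week pace, count publish dates per ISO week, including the empty weeks that are easy to overlook. A sketch; the dates are hypothetical and would come from your CMS.

```python
from collections import Counter
from datetime import date, timedelta


def weekly_counts(publish_dates: list[date]) -> dict[tuple[int, int], int]:
    """Articles per ISO (year, week), including empty weeks between the first and last post."""
    if not publish_dates:
        return {}
    counts = Counter(d.isocalendar()[:2] for d in publish_dates)
    start, end = min(publish_dates), max(publish_dates)
    weeks = {}
    day = start - timedelta(days=start.weekday())  # Monday of the first week
    while day <= end:
        key = day.isocalendar()[:2]
        weeks[key] = counts.get(key, 0)
        day += timedelta(weeks=1)
    return weeks


def off_pace(publish_dates: list[date], low: int = 2, high: int = 3) -> list[tuple[int, int]]:
    """ISO weeks whose output fell outside the low..high range."""
    return [week for week, n in weekly_counts(publish_dates).items() if not low <= n <= high]


posts = [date(2026, 3, 2), date(2026, 3, 4), date(2026, 3, 6),
         date(2026, 3, 10), date(2026, 3, 23), date(2026, 3, 25)]
print(off_pace(posts))
```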

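If you can sustain this output for three weeks, search engines will noticeably increase how often they crawl your site. Many new sites see spider visits climb from a few a day to several dozen within a month; that is your “trust account” accumulating deposits.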
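You can watch this happen in your server's access log. The sketch counts Baiduspider and Googlebot requests per day, assuming the common “combined” log format (the Nginx default); the sample lines are made up. User-agent strings can be faked, so for anything beyond a trend check verify the requesting IPs (Google, for one, documents reverse-DNS verification of Googlebot).

```python
import re
from collections import Counter
from datetime import datetime

# [day/Mon/year:time zone] "request" status bytes "referer" "user agent"
LOG_LINE = re.compile(
    r'\[(?P<day>\d{2}/\w{3}/\d{4}):[^\]]*\] "[^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)
SPIDERS = ("Baiduspider", "Googlebot")


def spider_hits_per_day(lines) -> Counter:
    """Count requests per (date, spider name); lines can be an open log file."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue  # skip lines in other formats
        for spider in SPIDERS:
            if spider in match.group("agent"):
                day = datetime.strptime(match.group("day"), "%d/%b/%Y").date()
                counts[(day, spider)] += 1
    return counts


sample = [
    '192.0.2.1 - - [10/Mar/2026:08:15:02 +0800] "GET /seo-jiqiao/10-fangfa HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '198.51.100.2 - - [10/Mar/2026:09:01:44 +0800] "GET / HTTP/1.1" 200 8000 "-" '
    '"Mozilla/5.0 (compatible; Baiduspider/2.0; +http://www.baidu.com/search/spider.html)"',
    '203.0.113.5 - - [11/Mar/2026:10:00:00 +0800] "GET / HTTP/1.1" 200 8000 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]
hits = spider_hits_per_day(sample)
# With a real log: with open("access.log", encoding="utf-8") as f: hits = spider_hits_per_day(f)
```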

Add internal links to turn new content into crawl paths

With every new article you publish, you must do one thing: place at least 2-3 internal links to existing content in the new article.

This is the most overlooked yet most rewarding action in on-site optimization. Search engine spiders move from page to page along internal links, so every new piece you publish opens a new crawl path. Old articles published more than three months ago may never be revisited by spiders if no new content links to them.

The steps are simple: when writing a new article, think back over related articles already on your site and insert links to them where they fit. For example, if you write about “marketing company pricing”, you can link at the end to your earlier “how to write a marketing plan”. The two topics are related, so the link reads naturally.

Shallow structure: keep important content no more than 3 clicks away from the home page

Search engines have a simple criterion for judging whether a page is important: how many clicks it takes to reach it from the home page. The home page is level 0, a page one click away is level 1, and so on.

Your core pages, the ones carrying your most important long-tail keywords, must be reachable in no more than three clicks from the home page. This is not a technical problem but a site-structure design problem. The most effective approach is to place all your core content at level 1 or level 2.

If an important page sits at a click depth of 4 or even 5, search engines may not crawl it at all, or may give it very low crawl priority. The fix is simple: link to it from the home page or a channel page to “pull” it up to a shallower level.
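Given a map of which pages link to which (exported from a site crawler; the data here is hypothetical), click depth is a breadth-first search from the home page, which sits at level 0 as defined above. Pages the search never reaches have no click path at all and are reported as `None`.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Breadth-first search: the fewest clicks from the home page to each reachable page."""
    depth = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depth:  # first visit in BFS order is the shortest path
                depth[target] = depth[page] + 1
                queue.append(target)
    return depth


def needs_shallower_link(links, core_pages, max_clicks=3, home="/"):
    """Core pages deeper than max_clicks, or unreachable (None)."""
    depth = click_depths(links, home)
    return {p: depth.get(p) for p in core_pages if depth.get(p) is None or depth[p] > max_clicks}


site = {
    "/": ["/marketing/"],
    "/marketing/": ["/marketing/plans/"],
    "/marketing/plans/": ["/marketing/plans/2025/"],
    "/marketing/plans/2025/": ["/marketing/plans/2025/pricing"],
}
print(needs_shallower_link(site, ["/marketing/plans/", "/marketing/plans/2025/pricing", "/orphan"]))
```

Adding a link from `/` straight to the pricing page would bring its depth down to 1.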

One URL per piece of content: that's the bottom line

Another mistake many new sites make is serving the same page under several URLs. For example, if both “https://example.com/page?id=1” and “https://example.com/page/1” load the same page, the search engine treats them as two different pages and splits the weight between them. Worse, if some external links point to the first URL and others to the second, your core page's weight is diluted.

The fix is URL normalization: make sure each post has exactly one canonical URL, then 301-redirect every variant URL to it. If you use a mainstream CMS, plugins can usually handle this automatically.
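To see what a normalization rule set does, here is a sketch that collapses variants to one form and lists which URLs should 301. The preferred host and the `/page?id=N` to `/page/N` rule are hypothetical site-specific choices, and dropping every query string is only correct if your site never uses query parameters to serve distinct content.

```python
from urllib.parse import parse_qs, urlsplit, urlunsplit

CANONICAL_HOST = "example.com"  # hypothetical: the single host you chose (with or without www)


def canonical_url(url: str) -> str:
    """Collapse variants to one form: https, one host, no trailing slash, no query string."""
    parts = urlsplit(url)
    path = parts.path or "/"
    params = parse_qs(parts.query)
    # Hypothetical site-specific rule: /page?id=1 serves the same content as /page/1
    if path.rstrip("/") == "/page" and "id" in params:
        path = f"/page/{params['id'][0]}"
    if path != "/":
        path = path.rstrip("/")
    return urlunsplit(("https", CANONICAL_HOST, path, "", ""))


def redirect_map(urls: list[str]) -> dict[str, str]:
    """Every variant that differs from its canonical form should 301 to it."""
    return {u: canonical_url(u) for u in urls if canonical_url(u) != u}


print(redirect_map([
    "https://example.com/page/1",
    "https://example.com/page?id=1",
    "http://www.example.com/page/1/",
]))
```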

When revamping or changing domains, always use 301 redirects

Sooner or later you will face a site revamp or a domain change. This is the minefield most likely to wipe out your accumulated weight. The right approach is to 301-redirect each old page URL, one by one, to its corresponding new page. A 301 means “moved permanently”, and search engines transfer the old page's weight to the new one.

One key requirement: the redirects must stay in place long-term. Don't remove them three months after the domain change, because many users and search engines still use the old links. Keep them for at least a year, or until traffic to the new domain has fully stabilized.
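If your server runs Nginx (an assumption; Apache and most CMS redirect plugins have equivalents), the one-to-one mapping can be generated from a spreadsheet of old and new URLs instead of typed by hand. The sketch emits one exact-match `location` block per old path; the domains and paths are hypothetical, the output would go into the old domain's `server` block, and paths containing spaces or other special characters would need quoting.

```python
from urllib.parse import urlsplit


def nginx_301_rules(mapping: dict[str, str]) -> str:
    """One exact-match location per old URL, each returning a permanent (301) redirect."""
    blocks = []
    for old, new in mapping.items():
        old_path = urlsplit(old).path or "/"  # Nginx location matching ignores the query string
        blocks.append(f"location = {old_path} {{\n    return 301 {new};\n}}")
    return "\n".join(blocks) + "\n"


print(nginx_301_rules({
    "https://old-example.com/seo/10-tips.html": "https://example.com/seo-jiqiao/10-fangfa",
    "https://old-example.com/about.html": "https://example.com/about",
}))
```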

With these settings in place, your site has a healthy “internal circulation system”: a regular supply of content, clear crawl paths, and a clear flow of weight. The next question is how to verify that the system actually works and whether traffic is really growing.

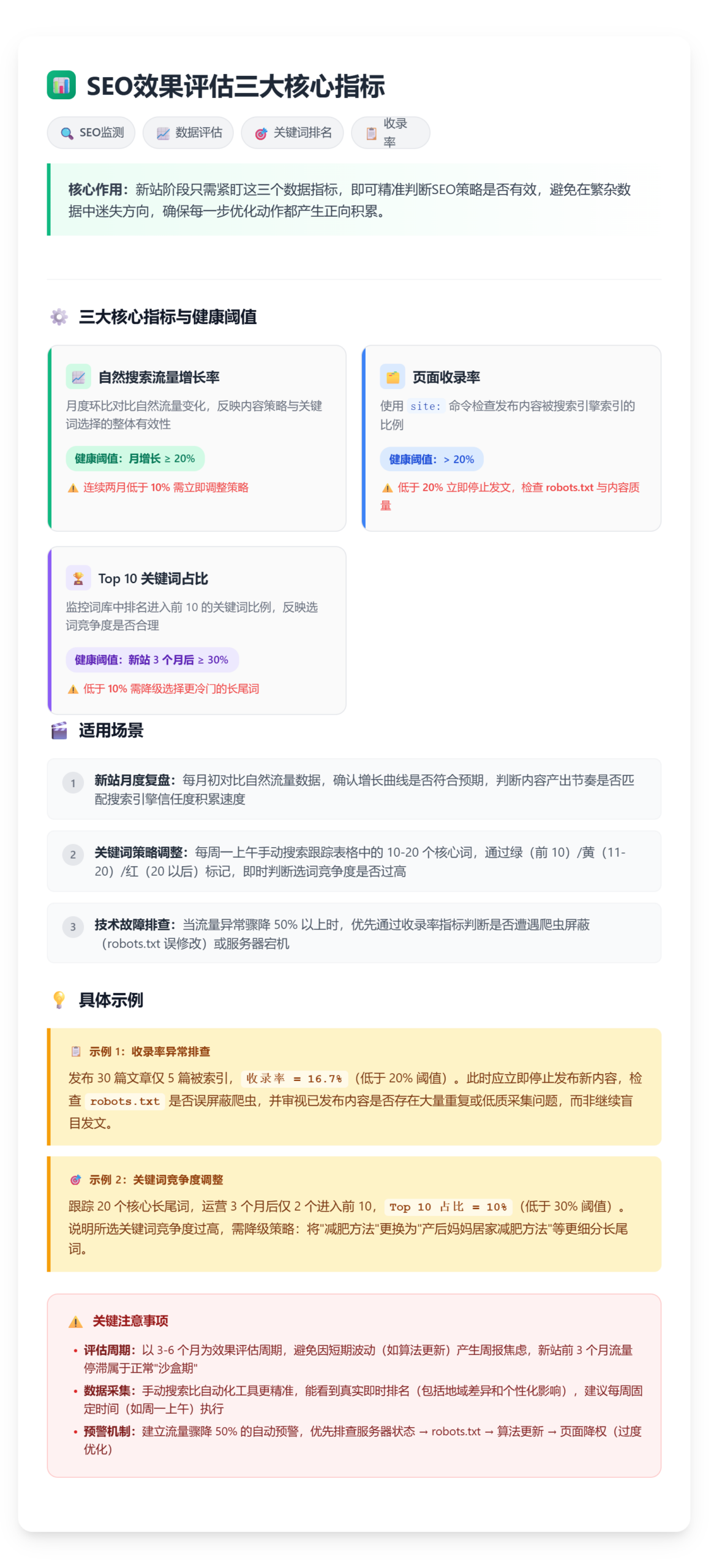

Data monitoring and impact assessment

You have built your site's technical architecture and established a steady publishing rhythm. Now a key question needs answering: are these actions actually working? SEO can't be judged by gut feeling; it has to be verified with data.

First install the data radar: Baidu Statistics or Google Analytics

First action: Immediately install Baidu Statistics (for domestic sites) or Google Analytics (for international sites) on your website. Both tools are free and sufficient, so don't get hung up on which one to choose at this point.

Once installed, focus on the “organic traffic” metric. Open the traffic sources report and filter for the “Search Engine” or “Organic Search” source. This is the direct result of your SEO work: with distractions such as direct visits and social media referrals excluded, you can see the real growth curve.

- Install and properly configure Baidu Statistics (for domestic sites) or Google Analytics (for international sites) for your website.

- In the statistics tool's back end, filter for organic traffic reports from “Search Engine” or “Organic Search” sources.

- Create an Excel spreadsheet that lists 10-20 core long-tail keywords across the top and records their rankings week by week down the rows.

- On a fixed day each week, manually search for these keywords and record the site's true ranking in the search results.

- Color-code the table by weekly ranking (green: top 10; yellow: 11-20; red: below 20).

- At the beginning of each month, calculate the previous month's organic search traffic growth rate and confirm whether it meets the healthy benchmark of 20% or more.

- Once a month, use the site: command to check the total number of indexed pages and calculate the indexing rate against the total number of published articles.

- Once a month, calculate from the keyword tracking table the share of monitored keywords that rank in the top 10 (the Top 10 keyword percentage).

- Set up an early-warning mechanism: if single-day organic traffic plummets by more than 50%, investigate immediately in this order: server, robots.txt, algorithm update, page demotion.

- Set a date three months from now for the first full impact review, assessing long-term trends against the baseline data.

Create a keyword ranking tracking sheet and do weekly checkups

Installing an analytics tool only lets you receive data passively; you also need to track keyword performance actively. Create an Excel spreadsheet that lists the 10-20 core long-tail keywords you're optimizing for across the top and records each term's ranking position week by week down the rows.

On a fixed day each week (e.g. every Monday morning), manually search these keywords and record where your site ranks. Mark positions in the top 10 green, positions 11-20 yellow, and anything below 20 red.

This may seem primitive, but it is more reliable than any automated tool: automated tools tend to pull cached data, whereas a manual search shows real, live rankings, including regional variation and personalization effects.
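If you keep the sheet as data rather than hand-painted cells, the color rule is a three-way threshold. A sketch; using `None` for “not found in the results you checked” is an assumption of this example.

```python
from typing import Optional


def rank_color(position: Optional[int]) -> str:
    """Green for top 10, yellow for 11-20, red below 20 or not found (None)."""
    if position is not None and position <= 10:
        return "green"
    if position is not None and position <= 20:
        return "yellow"
    return "red"


def color_week(rankings: dict[str, Optional[int]]) -> dict[str, str]:
    """Apply the color rule to one week's column of the tracking sheet."""
    return {keyword: rank_color(position) for keyword, position in rankings.items()}


print(color_week({"marketing company pricing": 8, "how to write a marketing plan": 14, "seo tips": None}))
```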

Keep an eye on these three core indicators, and don't look at the rest just yet

Too much data only confuses. In the new-site phase, focus on just three metrics:

- Organic search traffic growth rate: did organic traffic grow by more than 20% compared with last month? If growth stays below 10% for two consecutive months, something is wrong with your content strategy or keyword selection.

- Page indexing rate: use the site: command to check how many of your published articles are indexed. If you have published 30 articles and only 5 are indexed, the indexing rate is below 20%; go back immediately and check your robots.txt settings and content quality instead of continuing to publish blindly.

- Top 10 keyword percentage: in your tracking table, the share of monitored keywords that make it into the top 10. Three months after launch, this should reach 30% or more. If it stays below 10%, the long-tail terms you chose are too competitive, and you need to step down to less competitive terms.
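The three metrics reduce to simple ratios. The sketch below computes them with the thresholds from the list above; the input numbers are hypothetical (the 5-of-30 indexing case is the example in the text). Counts from the site: command are rough estimates, so read the indexing rate as a trend rather than an exact figure.

```python
def growth_rate(this_month: int, last_month: int) -> float:
    """Month-over-month change in organic visits (0.2 means +20%)."""
    return (this_month - last_month) / last_month


def indexing_rate(indexed_pages: int, published_pages: int) -> float:
    return indexed_pages / published_pages


def top10_share(positions: list) -> float:
    """Share of tracked keywords ranked 1-10; None means not ranking."""
    return sum(1 for p in positions if p is not None and p <= 10) / len(positions)


def health_report(this_month, last_month, indexed, published, positions) -> dict:
    g = growth_rate(this_month, last_month)
    i = indexing_rate(indexed, published)
    t = top10_share(positions)
    return {
        "growth": round(g, 3), "growth_ok": g >= 0.20,                # healthy: 20%+
        "indexing": round(i, 3), "indexing_alarm": i < 0.20,          # recheck robots.txt and quality
        "top10": round(t, 3), "top10_too_low": t < 0.10,              # pick less competitive terms
    }


positions = [3, 8, 15, None, 25, 9, None, 40, 12, 18]  # hypothetical week for 10 keywords
report = health_report(1200, 1000, 5, 30, positions)
print(report)
```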

Set up a traffic alert and troubleshoot immediately after a sudden drop

If you find one day that organic traffic has plummeted 50% or more from the day before, don't panic; troubleshoot in this order:

First, check whether the website opens normally and whether the server is down; spiders usually give up crawling after several consecutive failed visits. If the server is fine, check whether robots.txt has been changed by mistake and suddenly blocks all crawlers.

If nothing is wrong on the technical side, search for “Baidu algorithm update” or “Google algorithm update” to see whether the drop coincides with a large-scale adjustment of the ranking rules. Algorithm updates are increasingly frequent in 2026, and new sites are sometimes caught in the crossfire; they usually recover on their own after 2-3 weeks.

If technical issues and algorithm updates are ruled out, one of your core pages may have been demoted. Check pages whose titles or content you changed recently for signs of over-optimization or keyword stuffing.
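The alert itself is a one-line comparison; what matters is that it hands you the checklist in the right order. A sketch with the 50% threshold from the text; the daily visit counts would come from your analytics tool and are hypothetical here.

```python
TRIAGE_ORDER = [
    "Server: does the site open? Any 5xx errors or timeouts?",
    "robots.txt: was it changed to block crawlers?",
    "Algorithm update: any announced Baidu/Google ranking update?",
    "Page demotion: recently edited titles/content showing over-optimization?",
]


def drop_alert(yesterday: int, today: int, threshold: float = 0.5) -> list[str]:
    """Return the triage checklist if organic visits fell by threshold (50%) or more day over day."""
    if yesterday <= 0:
        return []  # nothing to compare against
    drop = (yesterday - today) / yesterday
    return list(TRIAGE_ORDER) if drop >= threshold else []


for step in drop_alert(400, 180):
    print(step)
```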

Evaluate results on a 3-6 month cycle and reject weekly report anxiety

SEO is a game of patience. Don't stare at the data every day, and don't overturn your whole strategy because of one week's ranking fluctuation. Set a hard rule: do a full review every three months, comparing against the baseline from three months earlier to see whether organic traffic has doubled and how many long-tail terms have reached the top 10.

If organic traffic shows no significant increase after three months but no drop either, that is actually good news: it means your website has passed the “sandbox period” and search engines are starting to trust you. Increase your content output over the next three months, and traffic will usually take off.

If six months have passed and organic traffic is still in single digits, that is a genuine red light. That's when you need to revisit your keyword strategy or consider using third-party platforms to break through the bottleneck.

🔑 Key point: Don't let week-to-week data fluctuations shake your strategy. Evaluate the overall trend over a 3-6 month cycle to judge whether you're heading in the right direction. If traffic hasn't dropped significantly but has grown only slowly over three months, the site has usually passed through a stabilization period; at that point you should maintain or even increase your investment rather than doubt the strategy.

Data monitoring isn't about creating anxiety; it's about confirming that every action you take is adding up to something positive. When the data grows steadily, you have the confidence to keep expanding; when it stagnates, you know where to start troubleshooting.

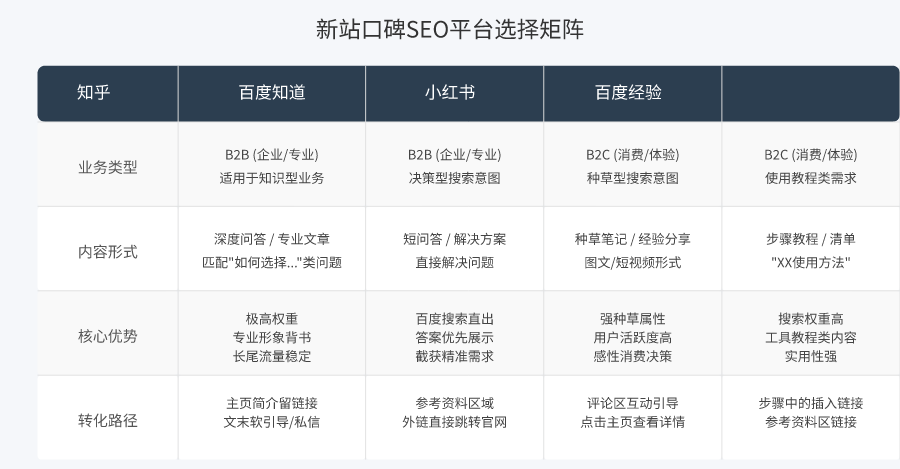

When your official site lacks authority: use third-party platforms to build traffic fast

When the traffic curve keeps moving sideways, tweaking on-site details usually doesn't help. Your data monitoring has already told you: organic growth has stayed below 10% for a long time and your top-10 keyword count is stuck in single digits. That is the typical bottleneck signal of a new site that lacks authority. At this point you need to step outside your official site, extend the battlefield to the search results page itself, and intercept traffic directly on high-authority platforms.

Set external link building aside for now and focus on how your reputation shows up in search results

For the past decade, the core of SEO was “content + external links”. But in 2026, Baidu's and Google's algorithms simply ignore large numbers of low-quality external links. Of the external links a new site buys or trades for at great expense, 90% produce no authority gain at all, and they may even trip the anti-spam mechanisms.

Don't focus on ineffective backlinks. Proper off-site optimization shifts the focus to “word-of-mouth SEO”, or SWOM (Search Word of Mouth). Its core question is: when users search for your brand name or industry keywords, does the first screen of the search results page work in your favor? Can it send visitors straight to you?

A new site has low authority and can't squeeze into the top ten for popular terms. But platforms such as Baidu Know, Baidu Experience, Zhihu, Xiaohongshu, and Bilibili carry very high authority themselves, and their content naturally ranks above your official site. Word-of-mouth SEO means proactively creating content on these platforms and turning traffic that would otherwise stay with them into your potential customers.

Step 1: Choose high-authority platforms relevant to your business

Not every third-party platform is worth the investment. Prioritize platforms that match “search intent” and keep content visible for a long time.

For B2B and professional-knowledge businesses, Zhihu and Baidu Know are the first choice. Users who search Zhihu for “how to choose a CNC machine tool” or “which ERP system is good” have clear learning and decision-making intent. A professional, detailed answer can build trust directly and bring in high-intent inquiries.

For B2C and consumer-experience businesses, Xiaohongshu and Baidu Experience are the first choice. Users searching “XX brand reviews” or “how to use XX product” want real user experiences. An illustrated product-recommendation note or a step-by-step tutorial converts far better than a dry product page on your official site.

Step 2: Create “problem-solving” content around user search terms

Don't use these platforms as billboards for hard-sell copy. Your goal is to intercept search traffic, and interception only works if the content precisely matches the user's “search intent”.

Specifically: open Baidu Know or Zhihu and search for your chosen long-tail keywords. Look at the top five questions and at the weaknesses of the existing answers under them. Then write a new answer or article that resolves the question thoroughly.

Take the keyword “WordPress site loads slowly” as an example. On Baidu Know, you could publish a detailed tutorial titled “Tested and proven: 3 steps to cut your WordPress site's load time from 5 seconds to 1 second”, with illustrated explanations of how to optimize images, how to choose a caching plugin, and how to measure server response time. At the end, you can naturally add: “If you're not familiar with server configuration, send me a private message to get a one-click optimization script”, or link to a more in-depth technical article on your official site.

Remember, the value of the content always comes first. The quality of what you post on third-party platforms directly determines whether a user clicks through to your site or becomes your customer. A perfunctory answer won't bring useful traffic, however much authority the platform has.

💡 Tip: A “content refresh” action triggers the platform's algorithm to recommend your answers to the top of the search results again, so they keep intercepting targeted traffic.

Step 3: Place brand keywords and conversion entry points sensibly

There are two core ways to play word-of-mouth SEO, and you should do both:

- Intercept industry-demand keywords: create solutions for long-tail problem terms and tutorial terms related to your business, aiming to capture targeted traffic your official site can't reach at this stage.

- Defend your brand's reputation positions: publish positive, professional introductory content under your company or brand name in advance, both to keep negative information from filling the gap and to catch users who search for your brand directly.

Be extremely restrained when embedding conversion entry points in your content. Zhihu does not allow URL links in the body of answers, but you can state your official website and business focus clearly in your profile, and end answers with soft guidance such as “to learn more, visit my profile to read my column articles”. Baidu Know allows links in the “References” area; make sure the link points to an official content page that is highly relevant to your answer, not the home page.

How do you track results? Let the data speak.

Keep using your keyword rank tracking table, but add a “third-party platform ranking” column. Each week, manually search your core terms and note where your Zhihu and Baidu Know answers rank. At the same time, give the homepage or lead links you leave on third-party platforms a dedicated “channel source” tag in Baidu Statistics.
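Tagging each outbound link with its platform is what lets your analytics tell these visitors apart. The sketch appends UTM parameters, which are Google Analytics' campaign convention; Baidu Statistics has its own source-tracking settings, so check its documentation for the equivalent. The source names and the `wom-seo` campaign name are hypothetical, and since Zhihu answers can't carry body links, the tagged URL would go in your profile.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def tag_link(url: str, source: str, medium: str = "referral", campaign: str = "wom-seo") -> str:
    """Append UTM parameters while keeping any query parameters already on the URL."""
    parts = urlsplit(url)
    query = parse_qsl(parts.query, keep_blank_values=True)
    query += [("utm_source", source), ("utm_medium", medium), ("utm_campaign", campaign)]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(query), parts.fragment))


print(tag_link("https://example.com/seo-jiqiao/10-fangfa", "zhihu"))
print(tag_link("https://example.com/page?id=1", "baiduzhidao"))
```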

Soon you'll see a change: for keywords where your official-site articles can't crack the top 20, your Zhihu answer may hold steady at No. 3. Visitors who arrive through that answer often convert into inquiries at a much higher rate than general search traffic, because your professional answer has already earned their initial trust.

As your official site gradually builds authority through on-site optimization, quality content on these third-party platforms becomes a stable source of supplementary traffic and brand endorsement. Even after your official site's rankings rise, these pieces will stay in the search results for a long time, surrounding your brand from every angle.

The core of this approach is that it bypasses a new site's long authority-building cycle and sets up a stronghold directly on the “high ground” of the search results page. It doesn't replace SEO for your official site; it provides the most effective fire support during your weakest phase.

Start Now: Your Three-Week Execution Checklist

Now that you understand both on-site optimization and off-site leverage, what you need is an action plan you can execute immediately. Not next week, not tomorrow, but now.

Don't try to do it all in one go. SEO is a long war, but what matters most at the start is completing the smallest closed loop first. Split three months of work into three weeks and focus on only one thing each week; the pace will be steadier.

Week 1: Putting the technical foundations in place

Only four things to do this week; tick each one off when it's done.

First, create an XML sitemap. List all the important pages of your site and submit the sitemap to the Baidu Search Resource Platform and Google Search Console; this is the first step in letting search engines know “you exist”.

Next, check robots.txt. Make sure it doesn't accidentally block pages you want indexed. Many people have tripped up here: their site was online for months and spiders simply couldn't get in.

Then optimize the URL structure. In short: use static URLs in lowercase letters, separate words with hyphens, and keep the hierarchy to three levels or fewer.
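Most CMSes generate slugs for you, but if you build URLs yourself, the lowercase-and-hyphens rule looks like this. A sketch; `slugify` is a hypothetical helper, and because it keeps only ASCII letters and digits, a title written entirely in Chinese yields nothing, so such pages need a pinyin or English slug chosen by hand (like `seo-jiqiao`).

```python
import re


def slugify(title: str) -> str:
    """Lowercase the title and replace each run of non-alphanumeric ASCII characters with one hyphen."""
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")
    if not slug:
        raise ValueError("No ASCII letters or digits left; choose a pinyin or English slug by hand")
    return slug


print(slugify("10 Ways to Speed Up WordPress!"))
print(slugify("SEO Jiqiao: 10 Fangfa"))
```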

Finally, install an analytics tool. With Baidu Statistics or Google Analytics in place, the traffic data will tell you whether your later work is having any effect.

Week 2: Finding Your First Keywords

Focus on one thing this week: digging for words.

Use Google Keyword Planner, 5118, or Baidu Index to dig up 50 long-tail keywords related to your business. Search volume doesn't need to be high (500-3,000 will do), and give priority to terms with a difficulty below 40.

Once you've collected them, sift through the list, pick the 10 terms most likely to bring in traffic, and plan a content page around each one. Keywords first, then content; don't get the order wrong.

Week 3: Start producing content

Starting this week, publish 2-3 pieces of high-quality original content per week. Remember two actions: add 1-2 internal links in each article pointing to existing content, and submit each URL to the search engines once the piece is published.

This is your minimal closed loop. Run through it once, and the rest is repetition and fine-tuning.

One last word on mindset.

SEO results take 3-6 months to become noticeable, so don't lose sleep over each week's data. What you need to do now is tick the boxes week by week; after three weeks, the foundation is in place, and rankings and traffic will grow naturally from there.

Start now, don't wait for the perfect plan.