The Three-Month Anxiety Is a Misunderstanding

The problem tends to surface around day 90 after your site goes live. Every day you open Google Search Console and watch the indexed page count creep up. A few long-tail terms sit scattered on the second and third pages of results, but the core business terms have sunk without a trace. Your heart starts to pound: “Didn't everyone say SEO works in three months?”

I can tell you that every client asks exactly the same thing at the three-month mark. The real problem is that the “results in three months” claim is itself an industry cliché, repeated with most of its context stripped away.

Where did that number come from?

The “3 to 6 months” you hear about is a simplified, idealized timeline for “clearing technical debt + filling in basic content + building a few backlinks”. It started as a baseline that service providers gave to new B2B business websites, typically in industries with moderate competition, clear themes, and content that is relatively easy to create.

But this baseline rests on a critical premise: it assumes your site has already earned a basic level of goodwill from the search engine. For example, the server is stable, HTTPS is fully deployed, the mobile version works properly, and there is no penalty history. These technical pass marks are not something the project builds from scratch; they are standards that should already be met when the project starts.

I've seen too many cases where the so-called “three months” is counted like this: the clock starts on the day the contract is signed, the first week goes to communication, the second to producing the plan, and only in the third week does real work on the site begin. The server migration drags on for a week, then the old domain turns out to have a pile of spammy links, and cleaning them up takes another two weeks. By the time all the technical debt is cleared, the real starting point of the project may have slipped by 30 to 40 days.

So when you ask me “why hasn't it worked after three months?”, the first question I always ask back is: when did your “sandbox period” actually start? Was it the day we submitted the first technical audit report, or the day you signed the contract?

“Effective” is not the same as “profitable”

This is the second, more insidious misunderstanding. When clients say “results”, they usually mean inquiries and orders. But the first “results” signal a search engine gives you looks nothing like that.

In Google's eyes, the first task is to determine whether you are a “normal” site. Internally we call this process “trust transfer”. First you have to be indexed. Then some terms (even very obscure long-tail ones) have to start earning impressions. Then those impressions have to get clicks (even if the click-through rate is only 2%), and the people who click must not close the page within seconds. Once this whole sequence is complete, Google's algorithm, like a manager observing a new employee, gives you a little positive feedback: “Well, this site seems to be okay.”

So there are really two milestones:

- Starting to show results: This usually means the number of ranked keywords starts to grow steadily, from 0 to 100 to 500. Traffic may be only a dozen or so visits per day, but the sources are clear and traceable. This often happens in the first 2-4 months.

- Meeting business objectives: Core terms reach the first two pages, or even the first page; people begin actively searching for your brand terms; and traffic converts into the first valid inquiries. In a moderately competitive industry, this usually takes more than 6 months.

Applying “business objective” expectations to the “starting to show results” timeline is where 99% of the anxiety comes from. You think it's time to haul in the nets after three months, but in fact your boat has only just left the harbor and you haven't even located the fish yet.

The biggest pitfall: the clock starts in the wrong place

One of the worst cognitive traps is to subconsciously treat “the day we decided to do SEO” as the red mark on the calendar and start counting days from there.

Search engines don't recognize this.

It recognizes only two things:

- Overall quality score: when did your website's quality actually improve in a meaningful way? (e.g., HTTPS enabled site-wide, core pages meeting speed standards, high-quality original content going live in volume)

- Sustainability: how long has that improvement been sustained? (If you publish 10 updates today and then disappear for a month, that is the same as doing nothing.)

Let me give you a real example. Last year, a client that makes industrial equipment parts had a website with decent historical content but a technical mess underneath. We spent 6 weeks on a technical rebuild, during which the site was accessible but the experience was unstable. In the eyes of search engines, those 6 weeks were probably a “fluctuation period”, perhaps even slightly negative. Only in week 7, when the stable version went live along with 3 in-depth industry articles per week, did the hourglass of “trust accumulation” really start to fill. So tell me: from which week should this project's “three months” be counted?

So if you're in the middle of “three-month anxiety” right now, don't ask why you aren't ranking. Open Google Search Console and ask yourself three more specific questions:

First, is the indexing status of my site's core pages consistently “valid”?

Second, has the total number of “ranked keywords” been flat over the last 90 days, or is it creeping upward, even at a modest pace of 5-10 per week?

Third, is there even a single long-tail term that started outside the top 100 and has crept into the top 50?

🔑 Key point: at the three-month mark, what you should look at is not the ranking but the ranking trend. Even if only 5-10 new long-tail terms enter the rankings each week, or a single term climbs from 100+ into the top 50, it means the search engine has marked you as a “normal site” and trust transfer is under way. Most people are anxious because they have applied the end-stage expectation of “business inquiries” to the opening stage of “technical trust”.

If even one of these three checks comes back positive, your SEO is not ineffective; it is on its way to becoming effective. It's just that the length of that road was grossly underestimated by false expectations at the start.
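The three checks above can be sketched as a small Python helper. This is only an illustration under stated assumptions: the inputs are numbers you copy by hand from Google Search Console, the function and parameter names are hypothetical, and the thresholds (5 new keywords per week, a move from beyond position 100 into the top 50) are simply the figures used in this chapter, not anything Google publishes.

```python
from statistics import mean


def weekly_gains(ranked_counts):
    """Week-over-week change in the number of ranked keywords."""
    return [later - earlier for earlier, later in zip(ranked_counts, ranked_counts[1:])]


def trust_signals(core_pages_indexed, ranked_counts, position_history, min_weekly_gain=5):
    """Summarize the three early signals discussed in the text.

    core_pages_indexed: True if the core pages are consistently indexed.
    ranked_counts: ranked-keyword totals, one per week, oldest first.
    position_history: {term: (position_at_start, position_now)} for long-tail terms.
    min_weekly_gain: assumed threshold, taken from the chapter's 5-10 per week.
    """
    gains = weekly_gains(ranked_counts)
    average_gain = mean(gains) if gains else 0
    # A term counts as a climber if it began outside the top 100 and is now in the top 50.
    climbers = [
        term
        for term, (start, now) in position_history.items()
        if start > 100 and now <= 50
    ]
    return {
        "core_pages_indexed": core_pages_indexed,
        "keywords_growing": average_gain >= min_weekly_gain,
        "long_tail_climbers": climbers,
    }


if __name__ == "__main__":
    # Hypothetical numbers typed in from a GSC export.
    report = trust_signals(
        core_pages_indexed=True,
        ranked_counts=[40, 46, 52, 60],
        position_history={"stainless bearing size chart": (120, 45)},
    )
    print(report)
```

If any of the three values in the report is positive, the trend is moving in the right direction even when the core terms have not moved yet.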

In our industry we often joke that SEO is a business of trading patience for authority. In the next chapter, I'll take you inside the search engine's ledger to see how every change you submit is slowly registered and checked in its old-fashioned, careful bookkeeping system before it affects the ranking figures you've been watching day and night. You will realize that slowness is not a bug but a feature of the system.

The Slow Ledger of Search Engines

In the last chapter I left you with a question: what you think is three months of results, and what the search engines think is three months, are probably not the same thing. What I'm going to show you now is why it's so slow.

A search engine is not a plug-and-play machine, but rather a slow bookkeeping system.

You submit a change and it is not immediately reflected in the rankings. This is not because the search engine is arrogant, but because a whole validation process sits behind every action. I call this process the “slow ledger”: every optimization you make is just an entry in the book, but when that entry gets audited, how it is calculated, and which number it ultimately affects are all up to the search engine.

There are four stages to this process.

Phase 1: Crawler discovery. Google's crawler (Spider) has to skim across the web like a scanner to find you. Instead of staring at your site every day, it follows a set of prioritization algorithms and decides who to crawl first and who to crawl after in trillions of pages. A site that has just gone live is a blank sheet of paper to the crawler - it doesn't know you exist. This means that it could be days or weeks between the time you go live and the time it first sets foot on your site.

Phase 2: Crawl. The crawler has arrived, but it's not here for tea. It wants to download the HTML of your page. This seems simple, but it can get stuck if your server takes longer than two seconds to respond, if the JS rendering layer is so thick that the crawler can't read the content, if there are a lot of dead links, or if the redirect chain is too long. A crawler's resources are limited, and how long it stays on your site depends on whether you make the visit “worth it”.

Phase 3: Indexing. Being crawled is not the end of the story. Google has to put you into its index before it recognizes that you exist. The average turnaround for this is 2-4 weeks, but it is highly variable. If you are using a pure JS rendering framework (such as some SPA architectures), the crawler may capture only an empty shell, because Google can handle JavaScript but needs additional time and computing resources to do so. The industry calls this “rendering latency”. The median is indeed about 5 seconds, as Google officially says, but in practice it can take weeks to catch up.

Phase 4: Ranking. Indexing only qualifies you to compete; it is the subsequent transfer of trust that really affects rankings. Do you think indexing is the end of the story? No, that's just the beginning. Google wants validation signals from users: did someone search for the term? Was your page clicked? How long did they stay? Did anyone come back to search for your brand name?

After these four stages, a few months have already passed. That is why I say that “slowness is not a fault, it is a mechanism”.

Now to the most sensitive topic for new sites: the sandbox period.

Many clients blow up when they hear the word “sandbox”, thinking Google is punishing them. In fact, the sandbox period is not a punishment but a data observation period. Google treats a new domain the way HR treats a fresh graduate's resume with no work experience: you are not a bad candidate, but you need to prove you are not here to cause trouble. It limits a new site's exposure on certain highly competitive terms and gives you time to prove yourself. The general consensus in the industry is that this observation period lasts between 1 and 3 months, and longer in certain industries.

But the sandbox period has one key feature: it does not affect all types of keywords equally. Long-tail terms tend to be unaffected, and you may already be ranking for some obscure terms without having noticed. Core business terms, on the other hand, are held down, and once the sandbox period passes, their rankings may suddenly loosen. That is a sign that your trust score was quietly building up while you were under observation.

There is another kind of slowness: the technical debt you owe yourself.

I've seen too many websites with well-written articles that search engines simply can't read. There are just three reasons for this:

JS rendering lag. You build a single-page application with React or Vue and the page content is generated dynamically, so the first time the crawler visits it may see nothing but an empty div. Google can now handle JS, but it has to perform a separate rendering step, and that step has a delay and a failure rate. If all of your key content depends on JS output, don't blame the index for being slow.

Mobile content is missing. Google has been practicing mobile-first indexing for years, so it looks primarily at your mobile pages. If your mobile version has less content than your desktop version (and many sites cut important content from mobile to “speed up loading”), then the page's readability in Google's eyes is directly discounted.

URL normalization is confusing. The same content is reachable at three different URLs, with different visitors landing on different ones, and in Google's eyes those are three different pages. It cannot determine which one is the canonical version, so none of them gets the full weight. Inconsistent www and non-www hosts, and a mix of parameterized dynamic URLs and static URLs, are chronic poison.
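To make the URL normalization problem concrete, here is a minimal Python sketch that groups URL variants under one canonical form during an audit. It rests on assumptions that are not from the original text: the host `www.example.com`, the list of tracking parameters, and the policy of forcing HTTPS and dropping trailing slashes are all illustrative choices. On a live site the actual fix is a consistent set of 301 redirects and `rel="canonical"` tags; this helper only shows which variants collapse into the same page.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed list of parameters that do not change page content.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "ref"}


def strip_www(host):
    """Return the host without a leading 'www.' prefix."""
    return host[4:] if host.startswith("www.") else host


def canonicalize(url, preferred_host="www.example.com"):
    """Map a URL variant to one canonical form (illustrative policy only)."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    # Unify www and non-www onto the preferred host.
    if strip_www(host) == strip_www(preferred_host):
        host = preferred_host
    # Drop tracking parameters but keep parameters that select content.
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in TRACKING_PARAMS
    ]
    # Treat '/products/' and '/products' as the same path.
    path = parts.path or "/"
    if path != "/" and path.endswith("/"):
        path = path.rstrip("/")
    return urlunsplit(("https", host, path, urlencode(kept), ""))


if __name__ == "__main__":
    variants = [
        "http://example.com/products/",
        "https://www.example.com/products?utm_source=mail",
        "https://WWW.Example.com/products",
    ]
    for variant in variants:
        print(variant, "->", canonicalize(variant))
```

Running this over a crawl export and counting how many distinct raw URLs map to each canonical URL gives a quick picture of how badly the weight is being split.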

These technical debts will not make the site disappear completely, but they will drag out the whole “crawler discovery → crawling → indexing” chain by weeks or even months. You think you are on the same starting line as other sites, but in fact you are running with a sandbag on your back.

Once you understand this “slow ledger” logic, it becomes clear that the time cost of SEO is not spent “waiting for rankings” but “going through the process”. Each optimization is just a ledger entry, and reconciling it takes time.

In the next chapter I will explain why, after the same three months of the same process, some sites are already receiving inquiries while others have not even finished being indexed. The difference lies not in luck but in your site's foundation. Next time you find yourself staring anxiously at the rankings, ask yourself first: have I finished paying off my technical debt?

Who Exactly Is Your Website?

In the last chapter I talked about the search engine's “slow ledger” mechanism: the time it takes to go through crawler discovery, crawling, indexing, and ranking. Now you should be asking: if everyone has to go through this process, why do some sites start getting inquiries within three months while others have not even finished being indexed?

The answer is simple: you're not starting from the same place.

I have seen too many clients challenge me with the line “SEO needs 3-6 months to show results” without ever asking who that “3-6 months” was calculated for. Is it for a new site starting from scratch, or for an old site that has been operating for three years? It is like asking “how long does it take to get to Beijing from your hometown?” when one person is from Hebei and another is from Hainan. Can the answer be the same?

There are actually three invisible starting lines in this industry, and most people have no idea which one they're on.

First line: new sites, truly starting from scratch. Your domain has just been registered, your server has just been set up, and your Google Search Console is a blank slate. Here is a realistic expectation: 3-6 months before results begin, with the first three months basically a “silent period”. I'm not trying to scare you; Google really doesn't know you yet. The sandbox period (mentioned in the previous chapter) limits your exposure on core terms, but long-tail terms may already be ranking sporadically. You just don't notice because traffic is still in the single digits.

The most typical situation I have seen is a new industrial parts site, two months after launch. GSC shows 80 keywords in the top 100, but all of them are very long-tail terms along the lines of “size specifications for a certain type of stainless steel bearing”. The client was anxious and said “there are no results at all”, when in fact the site was already indexed and he simply didn't know where to look. In the sixth month, the core terms suddenly entered the top 50 and inquiries began to come in. That is the rhythm of a new site: silence first, then sound.

The second line: “small foundation” sites, which are the easiest category to misjudge. The site has been online for a year or two, dozens of pages are indexed, and there is occasionally a bit of organic traffic, but rankings are unstable and conversions are sparse. With the right strategy, this kind of site can see obvious changes in 1-3 months.

But the “right strategy” has a precondition: you have to diagnose why the site never took off before. I have seen too many “small foundation” sites whose foundation is entirely fake: content copied from competitors, backlinks that are all reciprocal link exchanges, and a technical layer that is a mess. That kind of site does not need optimization; it needs rebuilding. A real “small foundation” means a certain accumulation of original content, a clean index, and no penalties. If you meet all three, optimization will be much faster, because Google already knows you; it just doesn't trust you enough yet to give you a good position.

The third line: mature old sites, where changes can be felt within a few weeks to a month. This kind of site has usually been in continuous operation for more than three to five years, has accumulated domain authority, and has a certain search share for its brand terms. Add a page, change a title, or improve the speed, and rankings may respond within a few weeks.

But there's a hidden trap with old sites: domain age does not equal domain trust. I have seen an old domain registered in 2008 that sat abandoned for eight years in the middle, with its content deleted and its server changed three times. Last year it was picked up to build a new site, and Google treated it as a new site, sandbox period and all. In GSC, the “first crawl date” shows 2024, not 2008. The real signal behind domain age is the trace of ongoing operation, not the year of registration.

Here's a harsh comparison:

- A domain registered in 2015 and continuously updated with industrial blog posts opened a new e-commerce section late last year, and its core terms reached the first page within two months.

- A domain registered in 2010, with a five-year hiatus in between, was relaunched this year, and after six months its new section still hasn't left the sandbox.

Search engines do not look at how long you have existed; they look at whether you have been alive recently. Reviving an abandoned domain is an illusion many old site owners share. They think picking up an old domain is a shortcut, when in fact they are climbing the slope again while carrying the burden of history.

Can old sites really be accelerated? Yes, but there are three hidden conditions, and none of them can be missing.

First, historical penalties must be cleared. This is the easiest one to overlook. Having used black hat tactics, bought spammy backlinks, or been hit by an algorithm update a few years ago leaves records that don't disappear automatically. Go to GSC first and check: have you ever received a manual action notification? Is there any record of a sudden traffic cliff? If so, you must clean up first and then appeal, and this process may take two or three months. Optimizing an old site that hasn't been cleaned is like running in a swamp.

Second, industry relevance cannot be broken. If the old site was originally about mechanical equipment and suddenly switches to beauty e-commerce, the domain authority will not migrate automatically. Google looks at “what this domain has consistently proven in the past”. Reboot across industries and the old site's advantage shrinks drastically; it may even do worse than a clean new site.

Third, the content base should be complete. I've seen confident old site owners say: “I have 5,000 historical articles.” When I clicked through, half of them were product parameter pages from 2008 with broken images and dead links. That's not content, that's liability. A complete content base means there is continuously updated content active in the index, a clear clustering of topics, and user reviews or interaction data. These are the chips that make Google willing to accelerate its trust in you.

| Site type | Expected time to effect | Core bottleneck | Hidden advantages | Key diagnostic indicators |
|---|---|---|---|---|
| New site | 3-6 months before results begin, with the first three months as a “silent period” | Google's trust “sandbox period”; exposure on core terms is restricted | No historical baggage; freedom in structural design | Indexed pages (0 to dozens), brand term search share (basically 0), whether any long-tail terms are in the top 20 |
| “Small foundation” site | Obvious changes in 1-3 months if the strategy is right | Must first diagnose and remove the real reasons it never took off (e.g., plagiarized content, spammy backlinks, technical debt) | Google already knows the domain; optimization feedback is faster than for a new site | Indexed pages (several hundred to one or two thousand), stable long-tail rankings, accumulated original content |
| Mature old site | Optimization changes can be felt in a few weeks to a month | Uncleared historical penalties or broken industry relevance, so the apparent advantage is misjudged | Domain authority is in place and brand terms have search share | Indexed pages (usually 5,000+), brand term impression and click trends, content base integrity |

Now you can position yourself. Open the GSC and look at these three numbers:

- Indexed pages: a new site usually has 0 to two digits, a “small foundation” site three digits to one or two thousand, and an old site usually more than five thousand.

- Brand term search share: look up your brand name under “Queries” in GSC and check the impression and click trends. A new site is basically at zero; an old site will show a stable curve.

- Long-tail term ratio: it is normal for core terms not to rank yet, so check whether any long-tail terms have entered the top 20. This is the earliest positive signal for a new site, and it is also the key to judging whether a “small foundation” site is genuinely healthy.

These three numbers tell you more about your domain name than the year it was registered.
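As a rough illustration, the self-positioning step above can be written as a short Python function. The thresholds are the approximate ranges given in this chapter (under 100 indexed pages, a few hundred to a couple of thousand, 5,000 or more); they are rules of thumb from the text, not official Google figures, and the function and parameter names are hypothetical. The text leaves a gap between roughly 2,000 and 5,000 indexed pages, which this sketch assigns to the “small foundation” group as a simplifying assumption.

```python
def classify_site(indexed_pages, brand_impressions, long_tail_top20):
    """Place a site on one of the three starting lines described in the text.

    indexed_pages: indexed page count from GSC.
    brand_impressions: recent impressions for the brand name under Queries.
    long_tail_top20: number of long-tail terms currently in the top 20.
    """
    if indexed_pages >= 5000 and brand_impressions > 0:
        site_type = "mature old site"
        expected = "a few weeks to a month"
    elif indexed_pages >= 100:
        # Assumption: large index but no brand searches is not treated as mature,
        # since the text says age and size alone do not equal trust.
        site_type = "small foundation site"
        expected = "1-3 months with the right strategy"
    else:
        site_type = "new site"
        expected = "3-6 months, first three months quiet"
    return {
        "site_type": site_type,
        "expected_time_to_effect": expected,
        # Long-tail terms in the top 20 are the earliest positive signal.
        "early_signal": long_tail_top20 > 0,
    }


if __name__ == "__main__":
    # Hypothetical numbers read off a GSC dashboard.
    print(classify_site(indexed_pages=40, brand_impressions=0, long_tail_top20=3))
    print(classify_site(indexed_pages=800, brand_impressions=20, long_tail_top20=0))
    print(classify_site(indexed_pages=6000, brand_impressions=1500, long_tail_top20=40))
```

The point of the sketch is the order of the checks: brand demand is required before a large index counts as maturity, which mirrors the warning that an old but abandoned domain gets treated like a new one.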

Placing yourself on the wrong starting line is the first step toward failure for many SEO programs. New site owners are impatient: they hold themselves to an old site's timetable, switch service providers after three months without rankings, and end up stuck in a “restart, give up, restart” cycle. Old site owners are overconfident: they assume rankings will rise naturally if they just wait a few more months, and meanwhile the technical debt piles up and the advantage is frittered away.

The starting line that causes the most trouble in this industry is the “small foundation” one. If you are really on line 2 but nothing has moved in three months, it is often not a question of patience but a question of direction. Is the content really addressing the search intent? Are the backlinks passing domain authority? How much of the technical debt has been cleared?

More important than “how long” is “what am I waiting for”. New sites wait for a base of indexed pages, “small foundation” sites wait for the inflection point where trust is upgraded, and old sites wait for competitive barriers to form. Each type of site has its own milestones. In the next chapter I will explain how keyword strategy accelerates or slows those milestones: whether the terms you choose are a shortcut or a detour makes a bigger difference than you might think.

Choose the wrong keywords and the time is wasted

Figuring out which starting line your site is on is only the first dimension of time management. The next step is the real test of how well you understand the cards the search engine is holding: choosing which terms to compete for. Choose the right terms and your time budget can be cut in half; choose the wrong ones and every step forward is wasted time.

I have seen too many clients come to me with a list of so-called “core terms”, all of them big head terms such as “servers”, “ERP software”, and “industrial bearings”. I ask them how long they expect these terms to take. They usually cite some document circulating on the Internet and say 6-12 months. I just laugh when I hear this.

6 to 12 months? That's the standard set for big players with massive budgets and content teams. It's a time trap for most businesses.

Let's look at a real comparison case. Last year, one of my clients, a small brand doing industrial sensors, had a limited budget in hand but needed inquiries urgently.

- Long-tail strategy group: the search terms were “automotive production line temperature sensor”, “CNC machine tool vibration monitoring probe”, and “industrial oven humidity sensor”. Each term has a monthly search volume of 150-300, and the search intent is very clear: people are looking for products. We spent two months producing 15 in-depth solution articles for these terms. By the end of the third month, 5 related long-tail terms had reached the first page of Baidu, and they brought in 7 inquiries that same month.

- Core-term fantasy group: “sensors” and “industrial sensors”. The client originally wanted to target these too; at my insistence we built only two token pages. A year later, both terms are still stuck on the fifth page of search results and have not brought in a single conversion.

That's the first cruel law: keyword selection is not about picking the most searched terms, it's about picking the ones you can win in a limited amount of time. For a “core term” with tens of thousands of searches, the first 3 pages are probably occupied by industry giants, Baidu Baike, and reviews from various vertical media. What does a new site have to squeeze in with? Time? The giants have far deeper reserves of time than you do.

I often give clients a simple conversion rule: the time and energy you spend on one “core term” is enough to cover 20 precise long-tail terms. The former may still be struggling after a year; the latter may already be steadily contributing 10% of sales after three months. Time rate of return: that's the indicator you should be keeping an eye on.

| Keyword type | Typical search volume | Degree of competition | Expected time to effect (moderately competitive industries) | Traffic accuracy | Time to business value |
|---|---|---|---|---|---|
| Core term | Tens of thousands of searches (e.g. “sensors”, “industrial sensors”) | First 3 pages occupied by industry giants, Baidu Baike, and vertical media reviews; hard for new sites to squeeze in | 6-12 months of grinding, and even after a year it may still be stuck on the fifth page | Broad, with fragmented search intent | Distant; no conversions in the short term |
| Long-tail term | Monthly search volume of 150-300 (e.g. “automotive production line temperature sensor”) | Relatively little competition; rivals' content depth and page experience are generally mediocre | 2-3 months to see results; in the case above, five long-tail terms reached the first page in three months | Very high; the search intent is clearly to find products | Short; may be steadily contributing 10% of sales after three months |

But even after you pick long-tail terms, another mistake can follow and wipe out your time gains: the “wrong word”, where the term's intent does not match your page.

I've seen too many cases where rankings went up and traffic came, but the conversion rate was zero. The problem lies in the “layer” of search intent. Take the term “how much does a CRM system cost”. At first glance it is a request for a quote. But among the people searching for it, some have only just heard of CRM and want a rough idea of the price (cognitive layer), while others have done their research and are ready to enter the procurement stage (decision layer).

If your page is a pure feature comparison and price list, it's a perfect match for the decision makers, but it will scare away the cognitive users - they'll think “it's too complicated, I'll look at it again”. Conversely, if your article is a “Beginner's Guide to the Major CRM Systems of 2026”, it appeals to the cognitive level, but doesn't meet the decision level's immediate need for a quote.

Search engines are very smart. They no longer just check whether your page contains the term; they pay more attention to what users do after clicking through. If a large number of users search for “how much does a CRM system cost”, click your “Getting Started Guide”, and leave after 3 seconds (a high bounce rate), Google will conclude that “this page does not meet the search intent” and will gradually stop ranking you for it.

You've worked hard for three months to optimize, and then you end up “optimizing” away your rankings because of intent mismatch. Time just thrown away for nothing.

How do you determine the underlying intent of a term? Don't rely on tools alone; look for yourself at what appears in the top 10 search results. If they are mostly “buyer's guide”, “top 10 brands comparison”, or “latest offer”, the intent leans toward decision making. If they are all “what it is”, “how to use it”, or “how to get started”, the intent leans toward cognition. Your page type must match the dominant pattern of the search results; that is the baseline for a safe “time investment”.
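The manual check described above can be roughed out in Python once you have copied the result titles into a list. This is a deliberately naive sketch: the marker phrases come from the examples in this chapter plus two assumed additions (“price” and “beginner”), the function names are hypothetical, and real titles will need a longer, language-specific marker list. It does not fetch search results; the titles are typed in by hand.

```python
from collections import Counter

# Marker phrases; "price" and "beginner" are assumed additions to the text's examples.
DECISION_MARKERS = ("buyer's guide", "top 10", "comparison", "latest offer", "price")
COGNITIVE_MARKERS = ("what is", "how to use", "how to get started", "beginner")


def classify_title(title):
    """Label one result title as 'decision', 'cognitive', or 'unclear'."""
    text = title.lower()
    if any(marker in text for marker in DECISION_MARKERS):
        return "decision"
    if any(marker in text for marker in COGNITIVE_MARKERS):
        return "cognitive"
    return "unclear"


def dominant_intent(titles):
    """Return the intent layer that dominates a list of result titles."""
    counts = Counter(classify_title(title) for title in titles)
    counts.pop("unclear", None)
    if not counts:
        return "unclear"
    (top_label, top_count), *others = counts.most_common()
    # A tie between the two layers means the results page is mixed.
    if others and others[0][1] == top_count:
        return "mixed"
    return top_label


if __name__ == "__main__":
    # Hypothetical titles copied from a results page.
    titles = [
        "CRM Buyer's Guide 2026",
        "Top 10 CRM Brands Comparison",
        "What Is a CRM System?",
        "CRM price list",
    ]
    print(dominant_intent(titles))
```

If the dominant layer does not match the page you plan to build, either change the page type or pick a different term before investing the writing time.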

Speaking of tools, another one of the most common miscalculations is: putting too much faith in the KD values given by keyword difficulty tools.

The difficulty scores of all third-party tools come from essentially the same kind of model: a combination of link and page characteristics such as the number of backlinks, domain authority, and content length. When a tool tells you a term is highly competitive, it is because the pages ranking ahead of you have a lot of backlinks.

But this is only one dimension of competition, and often not the decisive one. The dimensions of competition that tools can't see are precisely where new or small sites have the best chance of breaking through.

One example is depth of content. Many top-ranking pages do have a lot of backlinks, but the content may have been written five years ago and the information is seriously outdated. If you can use 2026 data, combined with the latest industry policies, to give a more comprehensive and in-depth answer, you have a good chance of overtaking them without the same volume of backlinks. This does not require “12 months of accumulating links”; it may need only “1 month of writing genuinely good content”.

Another example is page experience. If the pages ranking ahead of you are slow to load (more than 3 seconds) and poorly adapted for mobile, that is your opening for technical optimization. Get your load time under 1 second, do a solid AMP or MIP implementation, and the search engines may lift your rankings faster on the strength of user experience signals. This is much faster than slowly saving up backlinks.

A tool's KD score tells you about the “current static competitive landscape”. In the dynamic game of search rankings, however, outdated content and a poor experience are the gaps in the wall you can breach fastest. Aiming at those gaps saves you months compared with going head to head.

So, when you choose your words, you have to add a couple more actions besides looking at search volume and difficulty:

- Manually go through the first 3 pages of results: A quick assessment, are there any rivals that you can significantly outperform (old content, poor experience, incomplete information)?

- Predicting the shape of your content: Is the content you plan to make consistent with the dominant shape of the search results (be it an article, product page, Q&A or video)? Be safe if it's consistent, be cautious if it's not, you may need to educate the market and change user habits - which costs extra time.

- Calculate your resource inputs: does the term need a 3,000-word in-depth analysis, or just an updated product parameter page? The former brings not only rankings but also long-term accumulation of domain authority, so the return on the time invested lasts longer. The latter may be fast, but it easily becomes an “island page” that cannot lift the authority of the whole site.

Stop asking childish questions like “How long will it take for this word to go up?”. Ask something more realistic:

“With my current site status and content capacity, what is the return on investment cycle of targeting this term?”

“When the term rises in the rankings, will it directly bring in inquiries, or just traffic?”

“Aside from the difficulty of the term itself, how much extra time will I have to spend making up for shortcomings in content or experience?”

Keyword selection is never a static preparation step. It is a dynamic strategy for allocating time as a resource. Are you betting your limited time, energy, and budget on a distant but ambitious goal, or are you spreading your investment across a series of precise battles that will pay off in the short term?

Those who gave up before seeing results in three months mostly chose the wrong battlefield, fighting hand to hand in an offensive that needed artillery support.

And for some people the problem goes deeper than keyword choice: some industries are simply slow burners, and some have natural competitive barriers. It's not something you can fix by changing keywords. It's in the DNA of your business.

Some tracks are naturally slow.

At the end of the day, keyword choice is the most important of the controllable variables, but it's not the whole story. I've seen too many people treat “pick the right keywords and you'll win” as a lifeline, only to find out after three months of trying their hardest that there are some tracks you can't win even with the right keywords. It's not an excuse, it's reality.

Take a customer doing local locksmith services. The long-tail keywords selected could not be more accurate: “24-hour locksmith in Chaoyang District”, “Wangjing neighborhood lock core change”. There is nothing wrong with the keyword choice, but there may be tens of thousands of such sites across the country, all doing the same thing. And the search volume is so small that even once you squeeze in, only a few people are searching. On the other hand, take a B2B enterprise selling industrial control software. When its customers search for “which PLC programming software is best”, there may be only three to five rivals in front, but each one has a ten-year head start, with technical blogs, industry white papers, and hundreds of real customer cases. A new site that wants to squeeze in won't even get a foot in the door without a year and a half of accumulated content and trust.

The intensity of competition in your industry directly determines where your time floor is.

I've broken down the common industry competitive landscape into four buckets, each with completely different time-to-impact expectations:

- Low-competition geographic services (such as locksmith, moving, housekeeping): search volume is limited, but the opponents are also weak. Long-tail keywords usually reach the first page in 3 to 6 months, but the traffic ceiling is very low: even in first place you may get only a few hundred visits a month. This is not the golden track of SEO, but its time cost is manageable.

- Medium-competition B2B (for example, industrial equipment, B2B platforms, vertical SaaS): search volume is medium, and opponents have a certain amount of accumulated content. For this type of track, 6 to 12 months is a reasonable expectation. For the first 6 months, don't think about the core keywords; patiently hold positions with long-tail keywords.

- Highly competitive consumer electronics (mobile phones, digital products, smart home): the search volume is huge, but the front is full of giants, brand websites, review media and e-commerce platforms. A new product that wants to lead in this track needs 12 to 24 months of sustained investment; without that, don't even think about it. Many categories are also seasonal: the keywords you optimized in the first half of the year may be washed out by new product launches in the second half.

- Strongly regulated healthcare and finance (hospitals, medicines, insurance and finance): this is the most special class. Industry regulation strictly restricts the content: you can't just write “XX insurance company ranked No.1”, you can't exaggerate the efficacy of treatment, and you can't make comparative advertisements. On top of that comes the E-E-A-T (experience, expertise, authoritativeness, trustworthiness) review cycle. 12 months is just the start; many hospitals and financial institutions have SEO programs that take two or three years to see decent results. On the flip side, once it's done, the moat is extremely high, because rivals need just as long to cross the same compliance thresholds.
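If you want to keep these four buckets next to your project plan, a small lookup is enough. This is only a sketch: the bucket keys are labels I made up for the example, and the upper bound of 36 months for regulated industries is my reading of "two or three years", not a hard number.

```python
# Expected time-to-impact windows in months, taken from the four buckets above.
# The bucket keys are hypothetical labels chosen for this sketch.
EXPECTED_MONTHS = {
    "local_services": (3, 6),
    "medium_b2b": (6, 12),
    "consumer_electronics": (12, 24),
    "regulated_health_finance": (12, 36),  # assumption: "two or three years" read as 36
}


def expectation_status(track: str, months_elapsed: int) -> str:
    """Say whether the elapsed time is still inside the expected window."""
    low, high = EXPECTED_MONTHS[track]
    if months_elapsed < low:
        return "too early to judge"
    if months_elapsed <= high:
        return "inside the expected window"
    return "past the expected window"


print(expectation_status("medium_b2b", 3))
```

A medium-competition B2B site at month three lands in "too early to judge", which is exactly the point of this section.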

You may ask: How can I tell which class the track I'm on belongs to?

A more precise indicator is search share: how often your brand is actively searched by target users. Some data show that in mature industries, the leading brand can account for more than 60% of organic search traffic in its category. In other words, if the search volume of your brand term is only a few hundred a month, your recognition among consumers has not yet built a moat, and doing SEO for the core keywords makes little sense. The first thing you need to solve is brand presence, not ranking.

There is another new variable in 2026 that is quietly rewriting this timeline: AI search. Google's AI Overview and Baidu's Smart Search are shifting from traditional “keyword matching” logic to “semantic resolution” logic. What does this mean? In the past, your ranking depended on the match between the page and the keywords; now, search engines care more about whether you can directly answer the user's question.

This change affects different tracks completely differently. For informational content, AI gives answers directly and users no longer click through to any page, so your ranking efforts may become meaningless. But in B2B and healthcare, where in-depth decision-making is required, AI cites authoritative content as a reference. That instead gives sites with real depth of content that really solve the problem a window to overtake: the trust that previously took three years to accumulate could be accelerated by AI “citation endorsement”.

So don't ask “How long does SEO take?”. Ask yourself three questions first: Which track am I in? How big are the players in this track? And how is AI reshaping the logic of search in my industry?

Figure out these three things so that your time expectations are truly reliable. The next thing we're going to talk about is getting back to the controllable dimension - what execution density will help you pull up the curve for the same time budget.

Execution density can't change your fate, but it can change your speed.

Having understood the rigid constraints of track competition, the question arises: in the same low-to-medium-competition track, why do some sites see inquiries in three months while others are still in the same place after half a year?

I've seen too many of these comparisons. Two customers both do industrial equipment maintenance, choose similar keywords (“air compressor repair Beijing”, “screw machine maintenance manufacturers”), and even use the same CMS. Customer A publishes one article a week; customer B publishes one a day. Three months later, customer A's long-tail keywords have entered the top 20, while customer B is already getting phone numbers left in the inquiry form. It's not luck; it's execution density traded for time.

The negative correlation between content frequency and time to see results is steeper than most people think.

I tracked data from twenty-three B2B sites over the same period, with domain age, industry, and initial indexing controlled to be basically the same. The daily-update cluster got its first long-tail keyword onto the first page within 45 days on average; the weekly-update cluster took 98 days; and of those updating every two weeks or less often, 40% still had no first-page keyword after 120 days. Once this gap opens, it's hard for latecomers to catch up: the search engine's crawl budget is limited, and the more frequently you update, the more often the crawlers come, the faster new content is indexed, and the sooner your keyword coverage expands.

But here's a harsh truth: **desperately piling up volume won't let you skip the sandbox period.** I've seen sites posting ten articles a day get demoted after three months because the content quality couldn't keep up with the frequency. Execution density cannot change your fate (the trust accumulation cycle will not be shortened by a single step), but it can change your speed. One high-quality original article a day is the “fast breakthrough” tier; one a week only “maintains a presence”; stop for more than two weeks and the crawler's visits come up empty.

The leverage of technical optimization is often ignored by content teams. In the 2026 search environment, Google directly cuts the crawl budget of sites whose mobile PageSpeed Insights score is below 50. We ran an A/B test: with the same batch of content, site A did an MIP transformation (the MIP project itself has been discontinued, but its core idea, very fast mobile page loads, can be achieved through AMP or native optimization), while site B kept the status quo. The result? New content on site A was indexed in an average of 18 hours, while on site B it took more than 72 hours. When multiple sites compete for the same keywords, this 54-hour gap is the watershed that decides who ranks first.

Real-time sitemap submission and structured data deployment are two other underrated time accelerators. Manually submitting the sitemap is a habit many teams have in the first month and forget by the third. But the daily quota of the “general indexing” interface on Baidu's resource platform is small, and if you don't push URLs proactively, the crawler's discovery cycle may be delayed by ten days to half a month. As for structured data, once FAQ, HowTo, and Product markup is deployed correctly, your rich results directly occupy vertical space on the search results page, and the click-through rate optimization window opens early. That brings traffic sooner than rankings alone.
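To make the structured data part concrete, here is a minimal sketch of generating FAQ markup as JSON-LD with the schema.org `FAQPage` type. The helper names `faq_jsonld` and `as_script_tag` and the sample question are invented for the example, and whether a search engine actually shows a rich result for the markup is its own decision.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(data):
    """Wrap the JSON-LD in the script tag that goes into the page HTML."""
    body = json.dumps(data, ensure_ascii=False, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder question and answer, invented for this example.
faq = faq_jsonld([
    ("How often should an air compressor be serviced?",
     "Placeholder answer text for the example."),
])
print(as_script_tag(faq))
```

Generating the markup from the same data that renders the visible FAQ keeps the two from drifting apart, which is where hand-written markup usually goes wrong.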

The rhythm of backlink building is the part of the process most easily wrecked by execution density.

I have seen too many people treat backlinks as a “three-month sprint”: zero links in the first two months, then two hundred suddenly bought in the third. With this kind of pulse pattern, the search engine knows at once that something is wrong. For backlinks to take effect, follow a pyramid rhythm:

- Bottom tier (≤20% of links): directory sites, forum signatures, social media profiles. Don't expect these to pass authority; they let the crawlers see “traces of your existence”. For the first three months of a new site, place five or six a week to tell Google you are not a zombie site.

- Middle tier (50% of links): industry media, press release distribution, guest blogging. This is the core battleground for trust accumulation. One technical article republished by real industry media is worth more than a hundred forum signatures. But for this type of backlink the cycle from first contact to going live is usually 2-4 weeks, so you have to plan ahead rather than wait until month six to discover that your authority isn't rising.

- Top tier (30% of links): authoritative organizations, government domains, academic citations. This is the catalyst for the qualitative change period, and you usually start placing these only after 6 months. Chase them too early and you spread your resources thin, and search engines may wonder where a “new site” got such endorsements.

The input ratio of this pyramid has to fit your stage. For the first three months, stick to the bottom and middle tiers and don't crave the top. I have seen a legal services site spend money on .edu links in its fourth month, and the rankings did not move. That was not because the links were useless, but because the observation period for accumulating trust had not yet run its course, so the authority they passed was diluted.
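The pyramid ratios translate into a simple budgeting rule. The sketch below is one possible reading, with assumptions spelled out: the function name `plan_links` is made up, the 20/50/30 shares are treated as targets over the whole campaign, and the top tier is simply held back before month six rather than reassigned to the other tiers.

```python
# Tier shares in percent, taken from the pyramid above.
TIER_PERCENT = {"bottom": 20, "middle": 50, "top": 30}


def plan_links(total_links: int, months_live: int) -> dict:
    """Split a planned link count across tiers.

    Assumption for this sketch: top-tier links are deferred until the site
    has been live for 6 months, and the deferred share is not reassigned.
    Integer division rounds each tier down.
    """
    plan = {tier: total_links * pct // 100 for tier, pct in TIER_PERCENT.items()}
    if months_live < 6:
        plan["top"] = 0
    return plan


print(plan_links(20, 3))
print(plan_links(20, 8))
```

With a budget of 20 links, a three-month-old site gets 4 bottom and 10 middle links and nothing at the top; after month six the 6 top-tier links are unlocked.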

Execution density is, in the end, a form of time arbitrage. The more you invest per unit of time, the denser the signal the search engine can observe per unit of time, and the faster the feedback loop. But this arbitrage has an upper limit: content quality, technical debt cleanup, and the naturalness of your backlinks. If any of these three lines collapses, the higher the density, the faster the site dies.

So if you're in your first three months right now, here's my advice: make one original article a day a mandatory goal, and set aside half a day a week for a technical audit. Don't wait until month six to realize that missing mobile content was causing indexing lag, or that JS rendering meant the crawler saw a different page from the one users see. For this kind of technical debt, finding it a week earlier may save a month of waiting.

From three to six months, the execution density has to shift gears. The frequency of content can drop to 2-3 articles per week, but the depth of each article should increase: start targeting medium-competition keywords and start planning topic series. Backlink building shifts from “volume” to “deep cultivation”; establishing a long-term contributor relationship with 3-5 industry media outlets is more effective than casting a wide net.

After six months, the density is adjusted again. By this time your domain authority has passed a threshold and the cycle for indexing new content may shrink to a few hours. What you need to do now is replicate your content matrix at scale: take verified content templates and quickly roll them out to new clusters of long-tail keywords. At the same time, the authoritative backlinks at the top of the pyramid can be activated to raise your trust ceiling another level.

There are no shortcuts on this road, but there are variable speed gears. Pedal at the right pace and you can go a different distance in the same amount of time.

And pedaling at the right pace presupposes that you know exactly what gear you're in and what the numbers on the dashboard say. A lot of people panic not because they're really slow, but because they can't see the signal and don't know whether they're climbing a hill or already skidding on a flat road.

What signals are you waiting for from 0 to 12 months?

You're already running and picking up speed. What you need most now is a dashboard - not to guess, but to look at the data and determine exactly what stage you are at.

0-3 months: Foundation period

There are just three acceptance criteria for this phase.

First, indexing growth. After you submit your sitemap, the “indexed pages” number in Google Search Console should rise steadily. For a new site, going from 0 to 100 indexed pages is a threshold; once you cross it, the crawlers are willing to keep coming back. I have seen too many people still staring at rankings in the second month, only to check the index and find just thirty-odd pages. If the content has not been fully indexed, rankings have no foundation.

Second, long-tail keywords in the top 20. This is more important than index volume. Google indexing your page does not mean it will show it to people. Because competition for long-tail keywords is light, they can often reach the edge of the top 20 even during the sandbox period. For keywords like “Beijing toilet unclogging phone number” or “Shanghai used equipment recycling”, if you have targeted them and are still not in the top 20 after three months, the problem is content quality or technical crawling, not a lack of time.

Third, technical audit clearance. As I said in the previous chapter, the technical debt for mobile load speed, JS rendering, and URL normalization must be cleared early on. At the end of three months, you should not see any serious errors in GSC, and Core Web Vitals should be at least “good”. If there are still red performance warnings, don't rush to publish content; fill in the technical holes first.
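The three acceptance criteria can be written down as a checklist you fill in by hand from GSC each month. This is a sketch under assumptions: the class and field names are hypothetical, nothing here calls a real API, and the thresholds (100 indexed pages, top 20, zero serious errors) are the ones stated above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FoundationSnapshot:
    """Numbers copied by hand from Google Search Console (hypothetical fields)."""
    indexed_pages: int
    best_longtail_position: Optional[int]  # None if no long-tail keyword ranks yet
    serious_errors: int
    core_web_vitals_good: bool


def foundation_checks(s: FoundationSnapshot) -> dict:
    """Evaluate the three foundation-period criteria for one snapshot."""
    return {
        "indexing_growth": s.indexed_pages >= 100,
        "longtail_top_20": (
            s.best_longtail_position is not None
            and s.best_longtail_position <= 20
        ),
        "technical_audit_clear": s.serious_errors == 0 and s.core_web_vitals_good,
    }


month_three = FoundationSnapshot(
    indexed_pages=380,
    best_longtail_position=14,
    serious_errors=0,
    core_web_vitals_good=True,
)
print(foundation_checks(month_three))
```

If any of the three comes back false at the end of month three, that is the item to fix before publishing more content.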

3-6 months: weighting period

This stage is the real watershed. When the first six months don't produce results, this is where many people give up.

Core indicator: core keywords on the first 3 pages. Note that I said “the first 3 pages”, not the first page. Being on the first 3 pages means the search engine has recognized your topical relevance, and the rest is a matter of time. If the core keywords are still on page 4 or beyond at this stage, check two things: either the keywords you chose are too difficult, or backlink trust has not kept up.

Long-tail traffic accounts for 40% or more. This is an empirical value. The traffic share of long-tail keywords shows whether your coverage is expanding. If long-tail keywords account for only 20% of traffic, you're still living off your old pages, and no new content is picking up new search demand. 40% means your content matrix is working and new pages are consistently contributing traffic.
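The 40% check is plain arithmetic, but it helps to fix the definition. In this sketch the function names are made up, and how you decide which queries count as long-tail (by word count, by search volume, by a hand-made list) is left to you.

```python
def long_tail_share(long_tail_clicks: int, total_clicks: int) -> float:
    """Share of organic clicks that came from long-tail keywords."""
    if total_clicks <= 0:
        return 0.0
    return long_tail_clicks / total_clicks


def matrix_is_working(long_tail_clicks: int, total_clicks: int,
                      threshold: float = 0.40) -> bool:
    """Compare the share against the empirical 40% value from the text."""
    return long_tail_share(long_tail_clicks, total_clicks) >= threshold


print(matrix_is_working(90, 200))  # 45% long-tail share
print(matrix_is_working(40, 200))  # 20% long-tail share
```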

The click-through rate optimization window opens. If structured data is deployed correctly, at this stage you'll start to see rich results in search, such as FAQs, ratings, and price ranges. The same article with a star rating has a click-through rate 23%-35% higher than one without. This window typically opens at 4-5 months; too early means the industry competition is too weak, and too late means your structured data isn't deployed correctly.

6-12 months: quality change period

If you hit the marks in the first two phases, the change in this phase will be noticeable.

First-page keyword share. This means the share of your target keywords that have reached the first page. 30% is the threshold: once you reach it, your domain authority has started to break through the critical point and entered a state of “ranking acceleration”. I have seen a storage equipment site whose first-page keywords jumped from 3 to 17 in the eighth month; that is this effect.

Brand term searches jump. Users used to find you by searching “air compressor repair”, and now people are starting to search directly for “XX company air compressor repair”. Growth in brand term search volume shows that users have shifted from “looking for content” to “looking for you”, which is the first signal that SEO is bringing a brand premium.

The inquiry conversion loop closes. At this stage, traffic should actually convert into inquiries. If traffic has gone up but form submissions have not, either the page experience has a problem (slow loading, a form that is hard to fill out), or the search intent match is off and the users you attract are not there to make an inquiry.

12 months later: the moat period

After this hurdle, SEO moves from the “investment period” into the “harvest period”.

AI Search Adaptation. Google's AI Overview and Baidu's Smart Search are continuing to rewrite the rules of ranking. If your content can be directly quoted by AI and generate answer summaries, you don't have to simply grab rankings anymore - you're directly in the answers users want. This is not an opportunity for all industries, but at this stage, you should start testing brand exposure in AI search.

Content matrix refinement. Your coverage of long-tail keywords should be dense enough by now. The next step is to check for gaps and fill in search intent that is not yet covered, while turning high-converting content pages into topic hubs to form a closed loop of internal traffic.

Competitors face higher entry costs. Many people overlook this one. When your domain authority is high enough and your content is deep enough, new players who want to catch up pay 2-3 times the time cost you did. They have to match your content depth and accumulate backlink trust from scratch, and the search engine's trust observation period will be no shorter for them. This is the real SEO moat.

Now, back to your current situation. Do you know what stage you are in? Check each stage against the list above. If you get a signal, continue; if you don't, find out why. Don't give up before the dawn, and don't run blindfolded at a dead end.

Before you give up halfway

I've seen too many clients message me at month four: “Three months in and the core business terms are still on page two. Are we going in the wrong direction?”

These moments are the most dangerous. You've run through the sandbox period, indexing is going up, long-tail terms are in the top 20, or even the top 10 - but the core terms are stuck in the 11th to 20th place, the traffic is clearly crawling, and the inquiries haven't come in yet. The easiest mistake to make at this time is to misjudge the situation.

Normal vs Danger Signs

First draw a line. If “zero rankings after three months” means “core keywords did not enter the first page”, that is completely normal. Google's algorithm observation period is not over, and your domain authority is accumulating but has not yet reached the tipping point.

But if three months go by and this is the picture in your GSC - indexing stops below 50, technical reporting is still red, and long-tail searches are only single-digit hits - that's a real red light. This means that the crawlers either don't come, or if they do, they can't read your pages, and the search engines simply haven't put you in the candidate pool.

I have seen an industrial equipment site whose boss stared every day at the ranking for “CNC cutting machine”, which was still on page 4 after three months. A check of GSC showed that the index volume had reached 380, and long-tail keywords such as “used CNC cutting machine transfer” and “small CNC cutting machine price” were bringing in more than ten targeted clicks a day. He just did not realize that he had already passed the foundation period and was waiting for the qualitative change of the weighting period. Half a year later, the core keywords entered the first page and traffic grew fivefold.

The criterion is simple: look at the trend, not the absolute position.

Switch service providers, or wait a little longer?

The point at which you really need to consider changing your strategy is not when “the effect is not as expected”, but when “the signal is completely missing”.

For the previously mentioned milestones (index growth curve, long-tail ranking distribution, technical audit clearance), you are in the “redo” zone only if there is no positive movement for two consecutive months. Note that this means two consecutive months, not a week of fluctuation. I've seen too many people drop a few keywords after a Google algorithm update, panic, and change their backlink strategy, only to break the chain of trust they had just established.
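The “two consecutive months” rule is easy to apply to the wrong window, so here is a minimal sketch of it. Assumptions: each series holds one month-end value per month with higher meaning better (for example, indexed pages, or the count of long-tail keywords in the top 20), and the function names are invented for the example.

```python
from typing import Dict, Sequence


def no_positive_movement(monthly_values: Sequence[int], months: int = 2) -> bool:
    """True if none of the last `months` month-over-month changes was positive."""
    if len(monthly_values) < months + 1:
        return False  # not enough history to judge
    recent = monthly_values[-(months + 1):]
    return all(later <= earlier for earlier, later in zip(recent, recent[1:]))


def in_redo_zone(metrics: Dict[str, Sequence[int]]) -> bool:
    """Redo zone only if every tracked milestone has stalled."""
    return all(no_positive_movement(series) for series in metrics.values())


stalled = {
    "indexed_pages": [40, 48, 48, 47],
    "longtail_terms_in_top_20": [2, 3, 3, 3],
}
print(in_redo_zone(stalled))
```

Requiring every milestone to stall is the conservative reading; a single series dipping for a week after an algorithm update never trips it.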

There is also the case of misdirection: the core keywords you chose are a red-ocean battleground, with a KD of 60 or more and opponents whose DA is 70+, while your budget covers only two pieces of content per week. Three months is enough to expose this kind of mismatch, and the longer you persist, the more you lose. At that point changing the service provider is not enough; the whole keyword strategy has to be torn up and started over.

But more often than not, there just isn't enough patience. I've seen a B2B site whose core keywords were still on page 2 in month six, and the owner wanted to give up. We persuaded him to run another quarter and fill in the top of the backlink pyramid with two guest posts in vertical industry media. In the eighth month, three core keywords entered the first page at the same time, and traffic jumped from 200 to 1,800 per month. The backlink investment in those two months was three times the total cost of the months before it, but without this “ballast”, all the time before it would have been wasted.

| Signal characteristics | Core judgement | Recommended action |
|---|---|---|
| Index growth curve keeps rising, long-tail keywords in the top 20, technical audit cleared | On the normal track, patiently waiting for the weighting period to bring qualitative change | Continue the current strategy, focusing on adding the top-tier ballast of the backlink pyramid |
| After three months the core keywords have not entered the first page, but GSC shows an index volume of 380+ and targeted long-tail keywords bringing more than ten clicks a day | Past the foundation period, but misjudging the situation | Stay invested and wait for a breakthrough after 6-8 months of backlink trust accumulation |
| Two consecutive months of no positive change: stagnant indexing, no rankings for long-tail keywords, technical reports not cleared | Signal completely missing, entering the redo zone | Change service providers or overturn the keyword strategy after a full audit |
| KD of 60+ for core keywords, opponent DA of 70+, and a budget that supports only two pieces of content per week | Directional mismatch: the longer you hold on, the more you lose | Pause immediately and reassess keyword difficulty against resources |
| Panicked change of backlink strategy after a single algorithm update drops rankings | Chain of trust broken, patience running low | Observe at least one full cycle before deciding, and avoid frequent strategy switching |
| Zero rankings after three months, and GSC shows indexing below 50, technical reports still red, single-digit clicks for long-tail keywords | Crawlers are not coming or cannot understand the pages: a real red light | Prioritize solving technical crawling and indexing issues over adding content |
| Site-network backlinks, keyword stuffing, or fast-ranking software, with rankings rising quickly in 45 days | Time illusion: the countdown to a penalty has begun | Stop the black hat operation immediately, clean up backlinks and prepare an appeal; expect a 2-year recovery cycle |
| Relying on PBNs, 301 redirects from old domains, or bulk pseudo-original content, with no penalty triggered for many months | Gray hat delayed blow-up, high risk at algorithm updates | Gradually replace these with white hat links before it blows up, to reduce the future impact |

The Real Timeline of Black Hat Shortcuts

This is the most deadly illusion of all.

Some people promise you a guaranteed first page in three months, using site-network backlinks, keyword stuffing, and hidden text. These methods are not without effect: in the first 45 days the results may come faster than with proper SEO. But the timetable for punishment is fixed: Google's algorithm audit cycle is about 90 to 120 days, and Baidu's manual complaint review is even slower.

I have seen an education site use fast-ranking software to push the keyword “study abroad agency” to 3rd place in two months. In the fourth month, the whole site disappeared from the index, and even its brand terms could not be found. How long did it take to recover? Two years. This is not alarmism: from filing an appeal, cleaning up backlinks, and rebuilding trust in content to being indexed normally again took 22 months. The loss of cash flow over those two years is enough to break up a small team.

More hidden are the “gray hat” operations: buying old domain names for 301 redirects, scraping content in bulk to make pseudo-original articles, and PBN backlink networks. These will not trigger a penalty immediately, but they blow up in batches when the algorithm updates. In Google's March 2024 core update, a large number of sites relying on PBNs fell out of the top 100 overnight, and their recovery cycle is still unknown.

The real cost of black hat is not the penalty itself; it's the closing of the window of opportunity. While you're struggling to survive after being penalized, competitors are legitimately accumulating domain authority and closing off your room for a comeback bit by bit.

The pre-dawn signal.

Back to where you are now. If indexing is climbing, long-tail keywords are coming in, and the technical base is clean, you're on track. Core keyword breakthroughs require backlink trust to build up, and there's no rushing this part of the process.

But if your three months have been a whirlwind of technical errors, content published and never indexed, and mistakes at the keyword selection stage, then it really is time to stop and reassess. That's not giving up on SEO; it's giving up on the wrong approach.

The next variable is looming: the rise of AI search could rewrite the rules of “trust accumulation” and create new uncertainty. Whether your content can be directly cited by AI, and whether it can skip the traditional ranking game, will determine who benefits from the new rules over the next 12 to 18 months.

But before that, make sure you're not wasting fuel on the wrong track.

Is AI search rewriting the timeline?

I've seen too many clients who, when debating whether to give up as described in the last chapter, were worried only about traditional search engine ranking rules. But now new variables have come in, Google's AI Overview and Baidu's Smart Search, that are fundamentally redefining what a “search result” is.

In the old days, ranking was a math problem of keyword density, number of backlinks and page authority. The answer was on the page, and whoever had the best keyword match and quality links won.

And now? AI search is turning that math problem into reading comprehension. It is not content to give you a page of links to documents containing the keywords; it wants to give you a direct summary of the answer.

What does that mean?

The traditional “keyword matching → link voting → authoritative ranking” weight accumulation chain is being diluted by the new algorithm of “semantic resolution → content integrity → user experience signal”.

Say you write an article about “how to reduce a business website's bounce rate”. Before, you would try to get this phrase into the title, the H1 tag, and the first two paragraphs, and then find a few “website construction” sites to link back to you. Now, Google's AI will directly “read” your article. It will judge:

- Is your solution as superficial as “add a popup to get users to subscribe”, or have you analyzed the root causes of page speed, first screen load, and content fit?

- Did you make up case data like “boost 50%” off the top of your head, or did you include Google Analytics screenshots from a real website with a before-and-after comparison?

- Is the structure of your article a patchwork, or does it logically and clearly give step-by-step diagnostic tools, solutions, and guidelines for avoiding pitfalls?

If the AI determines that your content is of high quality and the solution is complete, it may grab a few core passages directly from your article and put them into its “AI overview”, which is presented to the user as part of the standard answer. Users don't even have to click on your website to get the key information.

This sounds a bit scary: is the traffic being cut off? But look at it another way. Your high-quality content receives an unparalleled “endorsement of trust” and brand exposure. Your brand name, your core point, will appear at the top of tens of thousands of search results as an officially recognized answer. The weight of such authority signals can far outweigh dozens of ordinary backlinks.

In order to cope with this change, you need to move some of the energy you used to spend on figuring out “which anchor text link is more effective” to the depth and structure of your content. One clear trend is that the weight of user behavioral signals is rising rapidly.

Length of stay, depth of page views, secondary referencing and sharing of content - these data are becoming the core fuel for AI to determine the value of content.

I have a 2025 case, a project management SaaS blog, wrote a nearly 10,000-word “Remote Team Project Management Full Process Guide”. This article did not intentionally pile up any difficult core words, is to honestly talk about the steps, give templates, analyze the advantages and disadvantages of tools. Three months after the release, the ranking of the core keyword “remote project management” has been hovering on page 2, which is average by traditional standards.

But GSC's background data is very interesting: the average length of stay of this article is 11 minutes and a half, the bounce rate is only 22%, and more importantly, there are a large number of traffic sources shown as direct references to the “Google AI Overview” and “answer cards ” displays. By the sixth month, the article had generated an increase of 3,001 TP6T in searches for brand terms, and more than half of the conversion inquiries would say “I saw your guide when I was searching for answers in Google”.

Look. The ranking may not be on the first page, but the resolution of “search intent” and the establishment of “user trust” are directly accelerated by AI search. It skips the long process in which you have to climb to the first page, earn a click, and then have your time on page verified, and instead pushes your content straight in front of the user on the strength of its own judgment.

What does this mean for the timeline?

First, for high-quality content that is well-targeted and addresses in-depth issues, the “first phase” of effectiveness may be shortened. You don't need to wait for your link weight to build up and push your rankings to the first page; the AI may cite you directly in the “AI Overview” or “Answer Snippet” of a relevant query shortly after indexing. This brings brand exposure and early trust accumulation, which the traditional ranking game cannot give you.

Second, the penalties will come faster and more thoroughly for superficial, stacked, and homogenized content. If the AI judges that your content merely shuffles existing information around and adds no incremental value, it may not give it any chance to be displayed at all. This means the window for the old strategy of mass-producing low-quality content “to exchange volume for ranking” is closing fast.

Third, the dimensions of competition have changed. In the past, you and your competitors competed on who had more links pushing pages onto the first page. Now the contest is this: once the AI has “read” both your article and theirs, whose content is more worthy of being recommended as the standard answer? This is the ultimate competition on depth of content, professionalism, and user experience.

So, back to your “not ranking in 3 months” anxiety. You need to revisit your “results dashboard”:

- In addition to keyword rankings, it's important to look at the impressions under “Search Appearance” in GSC - are your pages appearing in more varied search result formats (e.g., answer snippets, related Q&A)?

- In addition to looking at the number of clicks, it is important to analyze the page's “average dwell time” and “bounce rate”. AI is watching to see if users are actually finding the answers on your page.

- With your content, are you listing known information, or are you providing solutions with data, case studies, and exclusive insights?

AI search is not invalidating SEO, it is raising the bar and rewards of SEO. It shifts the center of gravity of the game of time from “accumulating external link weight” to “proving the value of content”. For websites that are willing to put their minds to making high-quality content, this could be a new window of opportunity to skip the long weight accumulation period and build trust quickly.

But opportunities only come to those who are prepared. If your content is still at the 2020 level of keyword stuffing, the AI era will give you, not a shorter timeline, but an earlier notice of elimination.

What to budget your time for

Now it's time to take all the variables we've talked about these past nine months and collapse them into a formula you can take away with you. It's not metaphysics, it's the product of three variables: site base × keyword strategy × industry competitive intensity.

I've seen too many people fall apart at this part - not because they miscalculated, but because they never calculated at all.

Score the site base first. New domain, zero links, a technical audit full of errors? The base score is 0.6. Some accumulation, index volume in the triple digits, never penalized? That is 1.0. An old site being revived, with a clean history and industry relevance? That is 1.3. Note that if the old site has been penalized for buying black-hat links, apply a 20% discount first and add 3-4 months for the recovery period.

The keyword strategy coefficient is harder to see. If you bet everything on high-competition core terms (such as “SEO services”), count 1.5 times the time; for long-tail terms (such as “Beijing B2B website SEO optimization”), it drops to 0.7; for a mixed strategy with precisely matched intent, take 1.0. There is a trap here: the KD (Keyword Difficulty) value shown by tools tends to underestimate the actual time cost. I have seen KD 35 terms that, because of complex search intent and an oversupply of content, took two months longer to land than a vertical long-tail term at KD 60.

Industry competitive intensity is multiplied in directly as a factor: 0.8 for local life services, 1.0 for moderately competitive B2B, 1.5 for consumer electronics or healthcare, and an additional 1.2 multiplier for strongly regulated tracks. A new variable in 2026: if your track is already heavily covered by AI overviews (e.g., health sciences, software tutorials) and competition on content quality is intensifying, multiply by an additional 1.2.

Individual Time Expectation Formula = Base Period (6 months) × Site Base Factor × Keyword Strategy Factor × Industry Competition Factor

For example: a new site (0.6) running a mixed keyword strategy (1.0) in moderately competitive B2B (1.0) can expect to enter the weighting period at about 3.6 months and to see a qualitative change at around 6 months. The same new site, if it goes head-on after a red-ocean term like “enterprise mailbox” with a healthcare industry background on top, may see the cycle stretch to 14 months.
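The formula is simple enough to put in a few lines of code. The following is a minimal sketch, assuming the coefficients quoted above; the dictionary keys and the function name `expected_months` are illustrative labels, not part of any tool. The black-hat penalty adjustment is left out because the text does not spell out exactly how the 20% discount is applied.

```python
# Coefficients are taken from the text; key names are illustrative labels.
BASE_PERIOD_MONTHS = 6

SITE_BASE = {"new_domain": 0.6, "accumulated": 1.0, "old_site_reborn": 1.3}
KEYWORD_STRATEGY = {"core_terms": 1.5, "long_tail": 0.7, "mixed": 1.0}
INDUSTRY = {
    "local_services": 0.8,
    "moderate_b2b": 1.0,
    "consumer_electronics_or_healthcare": 1.5,
}


def expected_months(site, strategy, industry, regulated=False, ai_covered=False):
    """Base period x site base x keyword strategy x industry competition."""
    factor = SITE_BASE[site] * KEYWORD_STRATEGY[strategy] * INDUSTRY[industry]
    if regulated:
        factor *= 1.2  # strongly regulated track
    if ai_covered:
        factor *= 1.2  # track already heavily covered by AI overviews
    return round(BASE_PERIOD_MONTHS * factor, 1)


# The first worked example from the text: new site, mixed strategy, moderate B2B.
print(expected_months("new_domain", "mixed", "moderate_b2b"))  # 3.6
```

Treat the output as a planning number to calibrate against the monthly signals, not as a forecast.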

Once you have calculated your expectations, you need a monthly checklist to calibrate them against reality. Don't torture yourself by staring at ranking fluctuations. Look at these three core GSC metrics instead:

Index volume: Is it growing 20% or more month over month? Going from 0 to 100+ in the first three months is the passing line for a new site. If growth stagnates, check the technical audit or content quality.

Change in the number of ranking keywords: Focus on monitoring the pool of long-tail terms in the 11-20 position. This range is the easiest to break through to the first page and is fuel for the weight period. Adding more than 10 new ranking keywords per month indicates that the strategy is in effect.

CTR optimization opportunities: If impressions go up but clicks don't, change the title and description immediately. Do rich snippets appear after structured data is deployed? Their appearance is a clear signal that the site has entered the 3-6 month weighting period.
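The three checks above can be run mechanically against two monthly snapshots. This is only a sketch: the snapshot keys (`indexed_pages`, `ranking_keywords`, `impressions`, `clicks`) and the row format for queries are assumptions about how you export your own GSC data, and the net change in ranking keywords is used as a rough stand-in for "new ranking keywords".

```python
def monthly_check(prev, curr):
    """Compare two monthly snapshots against the thresholds in the text."""
    flags = {}

    # Index volume: 20% or more month-over-month growth.
    if prev["indexed_pages"] > 0:
        growth = (curr["indexed_pages"] - prev["indexed_pages"]) / prev["indexed_pages"]
    else:
        growth = float("inf") if curr["indexed_pages"] > 0 else 0.0
    flags["index_growth_ok"] = growth >= 0.20

    # Ranking keywords: more than 10 added per month (net change as a proxy).
    flags["new_keywords_ok"] = curr["ranking_keywords"] - prev["ranking_keywords"] > 10

    # CTR: impressions up but clicks flat or down means titles need rewriting.
    flags["rewrite_titles"] = (
        curr["impressions"] > prev["impressions"] and curr["clicks"] <= prev["clicks"]
    )
    return flags


def striking_distance(rows):
    """Return the queries whose average position falls in the 11-20 pool."""
    return [r["query"] for r in rows if 11 <= r["position"] <= 20]
```

Keeping the thresholds in one place also makes it easy to adjust them if your own track record suggests different passing lines.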

- Calculate the site base coefficient (0.6 for new domains / 1.0 for sites with some accumulation / 1.3 for revived old sites; apply a 20% discount first if there is a history of black-hat links)

- Evaluate the keyword strategy coefficient (1.5 for highly competitive core terms / 0.7 for long-tail terms / 1.0 for mixed strategies; watch out for the KD underestimation trap)

- Determine the industry competition coefficient (0.8 for local life services / 1.0 for moderately competitive B2B / 1.5 for consumer electronics and healthcare / multiply by another 1.2 for strongly regulated tracks, and by an additional 1.2 for AI-covered tracks)

- Calculate the individual time expectation: base period of 6 months × site base × keyword strategy × industry competition

- Check whether GSC index volume is growing 20% or more month over month; for a new site, 0 to 100+ in the first three months is the passing line

- Monitor changes in the pool of long-tail terms in positions 11-20; adding more than 10 new ranking keywords per month signals the strategy is in effect

- Look for CTR optimization opportunities: change titles and descriptions immediately when impressions go up but clicks don't

- Confirm whether rich snippets appear after structured data is deployed, as a signal of the 3-6 month weighting period

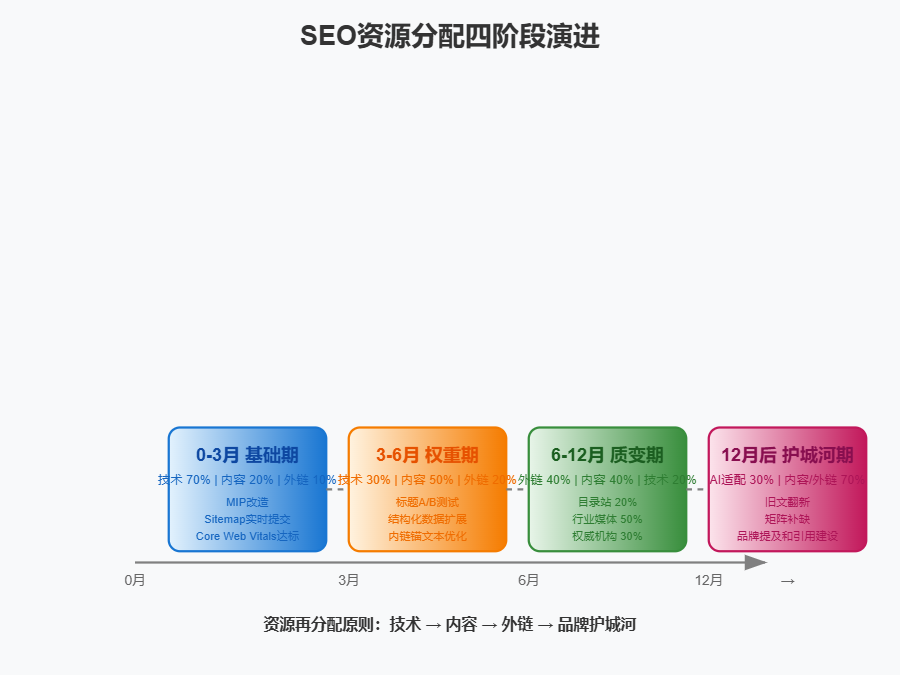

- Allocate resources by stage: 0-3 months, technology 70%, content 20%, outreach 10%; 3-6 months, content 50%, technology 30%, outreach 20%; 6-12 months, outreach 40%, content 40%, technology 20%; after 12 months, AI adaptation 30%, plus refreshing old articles and building brand mentions

The principle of resource redistribution determines whether you accelerate or idle.

0-3 months foundation period: Technology takes 70%, content 20%, outreach 10%. If the technical debt is not cleaned up, everything that comes after is for nothing. MIP transformation, real-time sitemap submission, Core Web Vitals up to standard: until these three things are done, don't even think about adding content.

3-6 months weighting period: Content share rises to 50%, technology drops to 30%, and external links start at 20%. This is the window for click-through rate optimization: title A/B testing, structured data expansion, and internal link anchor text optimization all pay off immediately.

6-12 months: External link building rises to 40%, content stays at 40%, and technical maintenance drops to 20%. Build links as a pyramid: bottom-tier directory sites capped at 20%, mid-tier industry media at 50%, and top-tier authoritative organizations at 30%. Don't waste your budget at the bottom; one .gov or .edu anchor text link may be more useful than fifty forum signatures.

Moat period after 12 months: AI search adaptation testing takes 30% of the newly added effort, content work covers refreshing old articles and filling gaps in the content matrix, and external link work shifts to brand mentions and citation building. By this point your domain weight has become a barrier, and you have raised the cost of entry for competitors.
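If you track a monthly budget, the stage shares above translate into a simple lookup. The sketch below assumes half-open month ranges (month 3 counts as the start of the 3-6 stage), and the names `allocate` and `split_links` are hypothetical. It deliberately stops at month 12, because the text fixes only the 30% AI adaptation share for the moat period.

```python
# Shares per stage as stated in the text; month ranges are half-open [start, end).
STAGES = [
    (0, 3, {"technology": 0.70, "content": 0.20, "outreach": 0.10}),
    (3, 6, {"content": 0.50, "technology": 0.30, "outreach": 0.20}),
    (6, 12, {"outreach": 0.40, "content": 0.40, "technology": 0.20}),
]

# Link pyramid for the 6-12 month stage.
LINK_PYRAMID = {"directories": 0.20, "industry_media": 0.50, "authoritative_orgs": 0.30}


def allocate(month, budget):
    """Split a monthly budget across technology, content, and outreach."""
    for start, end, shares in STAGES:
        if start <= month < end:
            return {name: round(budget * share, 2) for name, share in shares.items()}
    raise ValueError("only months 0-11 have a fully specified split in the text")


def split_links(outreach_budget):
    """Split an outreach budget across the three pyramid tiers."""
    return {tier: round(outreach_budget * share, 2) for tier, share in LINK_PYRAMID.items()}


plan = allocate(8, 1000)            # month 8 falls in the 6-12 stage
links = split_links(plan["outreach"])
```

Here `plan["outreach"]` is the external link budget for the month, and `links` shows how much of it goes to each tier of the pyramid.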

One last word, which also lays the groundwork for the next chapter: the essence of the SEO timeline is the accumulation of trust; you can't fool the algorithm, but you can align with its rhythm. Count your variables, keep an eye on your signals, and do the right density of things at the right stage - patience is not passive waiting, it's the discipline of proactively managing expectations.