Does every article you generate look the same?

You stare at the list of articles on your screen and mutter to yourself: why do these articles look more alike the longer I look at them?

The titles share the same structure. The first two paragraphs lay out trends with supporting data, the third breaks down the key points, and the last one sums up. The first article you read feels quite professional; by the fifth, you can guess the next sentence before you read it.

You are not imagining it. According to third-party research, content duplication on today's content platforms has reached an extreme level: topics are highly similar, viewpoints are largely the same, and the writing seems cast from a single mold. By the third article, users start scrolling past, and time on page has dropped noticeably compared with before.

The time you saved with AI writing tools may now cost you more to win back. When every site writes its content with AI, and everyone uses AI in much the same way, your site blends into the background.

Readers won't remember a site that “sounds right, but just doesn't have a lot of character”.

But I'm not writing this to sell anxiety. Read on and you'll see that anxiety solves nothing; what actually helps is a clear set of criteria for judging the problem and methods you can put into practice.

I'll help you work out whether your site has fallen into the content-homogenization trap, and how deep. Then I'll offer three directions for a fix: building a competitive moat with deep vertical-domain knowledge, training your own style model, and getting human-machine collaboration right. This is not theory; it's practical methodology, with real cases and recommended tools at the end.

In other words, by the end you'll at least know where to start.

Let's take a look at just how AI is muddying the waters of content creation.

AI is rewriting the rules of WordPress site creation

Now you know the problem and feel the anxiety. But anxiety only keeps you stuck; seeing the situation clearly is already the first step toward solving it.

We have to accept a basic fact: bulk-generating articles with AI tools has gone from astonishing cutting-edge tech to the “new normal” of content creation for WordPress sites.

This shift happened very quickly. Just a few years ago, when tools like Jasper and Writesonic appeared, we approached them with curiosity: trying them out, generating an opening paragraph or two, and marveling at the results. Now things are completely different. These tools can quickly produce entire articles, product copy, and social media posts, and even adapt the output to different publishing platforms. For site operators who need large volumes of content every day, it's like switching on a productivity cheat code.

The efficiency gains are overwhelming; there's no doubt about that. But what do they cost?

This comes down to the current state of the technology. AI, especially today's mainstream generative models, has clear limits when it comes to writing a good article. What it does best is text prediction based on patterns learned from massive amounts of training data: given a prompt, it picks the “most likely” words and sentence structures from what it has learned and combines them into text.

This brings us to the core problem: it lacks the “narrative flow” and “creativity” essential to human writing. It doesn't really understand your industry's specific context, the decision-making pressures behind a success story, or the subtle tone you use when talking to your users. What it generates is the “average optimal solution” of its training data: the most stable, least error-prone, and least distinctive content. Think of its output as a standard cup of instant coffee. It wakes you up and quenches your thirst, but it carries no trace of where the beans came from and none of a barista's signature brewing technique.

That's why so much AI-generated content has a complete framework and smooth sentences on the surface, yet always feels one step removed, like listening to an AI assistant with permanently flat emotions deliver a report. There is no emotional build from start to finish, no distinctive or sharp point of view, and certainly none of those “only someone who's been there could write this” details.

This echoes the feeling we described earlier: “the articles you generate all look alike.”

So are human creators out of the picture? Not at all, but their role must change.

In the past, you may have been a hands-on “typist” or “copywriter.” Now you need to be an “editor-in-chief” or “creative director.” Your core value no longer lies in the manual labor of crafting sentences, but in defining direction, infusing soul, and making critical judgments.

You need to tell the AI:

- What is the unique perspective of this article?

- Where do we get the core data we want to cite?

- What real, detailed customer feedback applies to this case?

- What emotion or call to action should this article leave the reader with at the end?

Leave repetitive tasks such as information lookup, building the basic framework, and language touch-ups to the AI. You, on the other hand, focus on what AI can't do: planning strategy, screening information for accuracy, incorporating personal experience and insight, and, ultimately, stamping the content with your own style.

Human oversight is not only indispensable; it is becoming more important. Without in-depth human involvement, a site's content quickly slides into the sea of “average optimal solutions” described above, losing both its recognizability and the “soul” that creates a real connection with readers.

In other words, the rules of creation have been rewritten. The core question has shifted from “who writes” to “who decides, and how to write collaboratively with smarter tools.”

So if your site's content is starting to blur together, don't give up on AI just yet. The problem may not be the tool itself, but whether you understand and have adapted to the new rules, or are still using an evolved tool the way you always have.

Three Real Reasons Why Content Becomes Samey

By now it's clear that AI is profoundly changing the game of WordPress content creation. At the heart of the new rules is a huge gain in efficiency, paid for with content that is becoming faceless: everything reads in the same voice, and you tire of it quickly.

Once you understand the “what,” you have to ask a more fundamental question: why did it come to this?

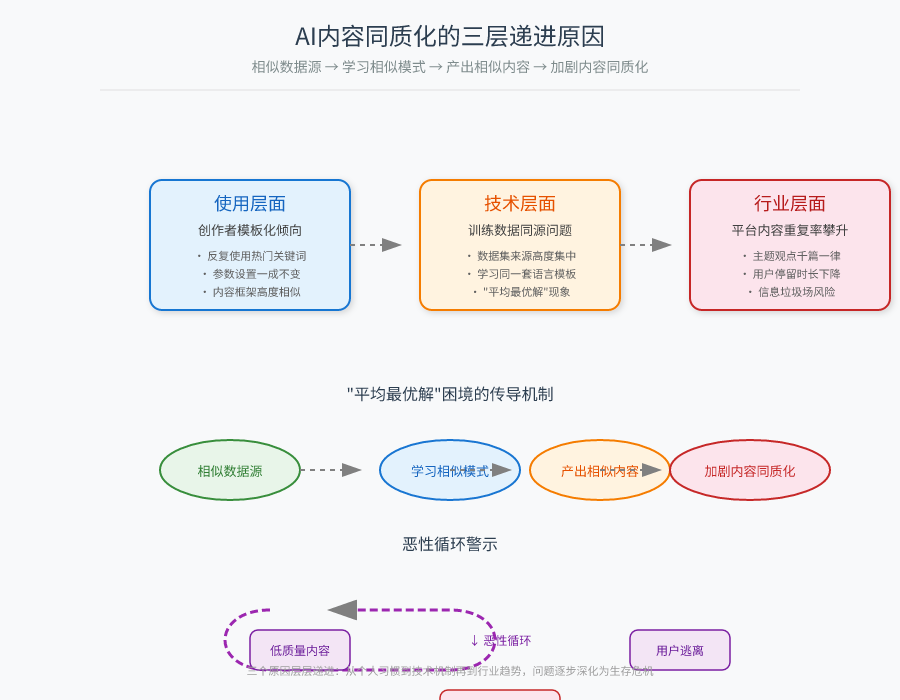

Content homogenization isn't any one person's failure; it is the inevitable result of forces acting at three levels. Unless these three causes are clear, every solution that follows will only treat the symptoms, not the root cause.

The first cause lies at the level of usage: most creators' habits are inherently prone to templating.

That's quite understandable. When you open an AI writing tool, what's your first move? Do you type what you really want to say, or do you habitually feed it a few so-called “hot” keywords first?

Survey data confirms this. More than 70% of AI tool users repeatedly choose similar topics across sessions: phrases such as “popular attractions,” “food recommendations,” and “travel tips” come up again and again. At the same time, almost nobody adjusts the AI's parameters from one session to the next; most people set them once and keep reusing them. The direct consequence is that everything you generate shares the same underlying framework: similar title structures, similar paragraph logic, even a similar tone in the opening sentence. AI is perfectly capable of writing diverse content; you, as the user, just haven't given it a diverse enough “starting point.”

The second cause lies at the technical level: the training data behind large models is seriously homogeneous.

You may not have considered a deeper question: what were the “brains” of the mainstream AI writing tools on the market actually fed on?

The answer: they all eat from nearly the same pot.

Mainstream AI models are trained on a highly concentrated set of sources: news reports, public academic papers, and social media posts account for more than 80% of the data. This means that different brands of AI tools are essentially learning the same set of “language templates”: the expressions that appear most often in the training data, the sentences that are most stable, least likely to be wrong, and most in line with “popular expectations.”

That's why I keep stressing the “average optimal solution.” AI isn't dumber than you; it's a machine without preferences that picks the safest answer. Ask it to write about a topic and it will instinctively choose the most popular angle, the most common data, and the safest conclusion. It's not that it doesn't want to innovate; its training data tells it that innovation is risky and the average is safe.

The third reason is at the industry level: platform content duplication continues to climb, and users have already voted with their feet.

If the first two causes explain why content becomes homogenized, the industry-level data shows how serious the problem has become.

Third-party research shows that content duplication on mainstream content platforms has reached a critical level. “Duplication” here means not just literal text repetition but highly similar topics, identical viewpoints, and nearly identical expression. Meanwhile, average user dwell time keeps declining. Users aren't stupid: when they open ten articles and find eight saying the same thing in the same words, they leave within seconds.
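If you want a rough, do-it-yourself check on your own archive, the sketch below measures only the most literal layer of duplication: overlapping three-word phrases (“shingles”) shared between pairs of your articles, scored with Jaccard similarity. It cannot detect shared topics or viewpoints, and the 0.3 threshold is an arbitrary assumption to tune, not an industry standard.

```python
import itertools
import re


def shingles(text: str, k: int = 3) -> set:
    """Return the set of overlapping k-word sequences ("shingles") in text."""
    words = re.findall(r"\w+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity |A & B| / |A | B|; 0.0 when both sets are empty."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def similar_pairs(articles: dict, threshold: float = 0.3, k: int = 3) -> list:
    """Return (title_a, title_b, score) for article pairs scoring >= threshold."""
    sigs = {title: shingles(body, k) for title, body in articles.items()}
    flagged = []
    for (ta, sa), (tb, sb) in itertools.combinations(sigs.items(), 2):
        score = jaccard(sa, sb)
        if score >= threshold:
            flagged.append((ta, tb, round(score, 2)))
    return flagged


# Usage: compare your own recent posts (titles and bodies are placeholders).
posts = {
    "Post A": "top five travel tips for visiting the old town this spring",
    "Post B": "top five travel tips for visiting the old town this summer",
    "Post C": "how we rebuilt our checkout page after a failed launch",
}
print(similar_pairs(posts))  # [('Post A', 'Post B', 0.8)]
```

A high score flags near-identical wording; two articles can still score low here and be homogeneous in angle and argument, which is what the manual tests later in this article are for.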

What does this trend mean for WordPress sites? It means that if you stick with the current “AI-generate, then batch-publish” model, your site is turning into a dumping ground for information that users are eager to escape.

More worrying still, homogenized content triggers a vicious cycle of degrading competition. Creators see that no one reads their content, so they chase publishing frequency even harder, sacrificing quality to grab traffic. The more low-quality content a platform hosts, the harder it is for truly valuable content to be seen, and in the end nobody wins.

The three causes build on one another: templated usage habits strip AI-generated content of diversity, homogeneous training data at the technical level makes the “average optimal solution” inevitable, and worsening industry-level data turns the problem from “optional” into an existential crisis.

The problem is clear enough. If you're a site operator, there's only one question on your mind right now: what to do?

That is exactly what the following chapters address.

Deep vertical knowledge is the king of competitive differentiation

Now that you understand the three root causes of content homogenization, your first thought may be: so how do I get out of this mess?

Abandoning AI tools and going back to purely manual writing is obviously unrealistic; once you've tasted the efficiency, nobody wants to go back. The real way out is not a question of “whether to use AI” but “how to use it.” Specifically, you need to feed your AI enough unique, deep “nutrients” that the content it produces differs from everyone else's at the root.

This leads to the first core strategy: deep integration of vertical-domain knowledge.

Don't let the term scare you. Simply put, it means taking the expertise and data in your field that no one else has, some of it not even public, and organizing it into a structured knowledge base that AI can understand. When the AI learns from and generates content with this “private library,” its output naturally becomes hard to imitate and easy to recognize.

Why does this work? Recall the technical root cause from the previous chapter: mainstream AI models are trained on homogeneous data. Your competitors are using AI too, probably the same or similar software, fed on information that is publicly available online to everyone. You're all at the same starting line, preparing for the same exam with the same practice questions. The only way to stand out is to hold an exclusive study guide of your own.

This “private library” is your vertical-domain knowledge graph. It is not a nebulous concept but a concrete, actionable build process.

Step 1: Determine the scope.

Don't be greedy, and don't try to cover the whole industry from day one. Narrow it down to a niche you know inside out. For example, if you run a WordPress B2B site for foreign trade, don't think at the level of “cross-border e-commerce”; think at the granularity of “trends in small-scale online fastener wholesale in North America in 2026.”

Step 2: Data collection.

This is the most time-consuming step, and also the most important. You need to gather the hard-to-reach, “deep-water” information in this niche, including:

- Proprietary data: Your own client case studies, transaction data, and industry reports (purchased or produced in-house).

- Transcripts of expert interviews: Interview front-line practitioners and technical experts, then turn the audio recordings or notes into text.

- Private Community Discussions: High-quality conversations, Q&As, and clashes of ideas in your industry community.

- First-hand documentation in foreign languages: Foreign forums, specialized blogs, and white papers that your peers are less likely to visit.

The process itself is a barrier: most people have neither the patience to do it nor access to these resources.

Step 3: Entity identification and relationship extraction.

In plain terms, this means organizing the mass of text you've collected into a knowledge map. In the fastener domain, for example, entities are specific concepts such as “stainless steel bolts,” “ASTM specifications,” and “trade compliance”; relationships connect them, such as “stainless steel bolts → suited for → outdoor environments” or “ASTM A193 → sets material standards for → bolts.” You can do some preliminary text analysis with basic tools (for example, Python's NLTK library), but the key relationships and logic need to be defined and calibrated by you, the domain expert.
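As a minimal sketch of that preliminary analysis, the code below counts how often pairs of expert-supplied entities appear in the same sentence. It uses only Python's standard library (a naive regex sentence splitter) rather than NLTK, and the `ENTITIES` list and sample notes are hypothetical. Co-occurrence only proposes candidates; naming the relationship (“suited for,” “sets material standards for”) is still your call.

```python
import itertools
import re
from collections import Counter

# Hypothetical entity list; in practice you, the domain expert, curate it.
ENTITIES = ["stainless steel bolts", "ASTM A193", "outdoor environments", "trade compliance"]


def split_sentences(text: str) -> list:
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def candidate_relations(text: str, entities: list) -> Counter:
    """Count how often each pair of entities appears in the same sentence."""
    pairs = Counter()
    for sentence in split_sentences(text):
        lowered = sentence.lower()
        found = sorted(e for e in entities if e.lower() in lowered)
        for a, b in itertools.combinations(found, 2):
            pairs[(a, b)] += 1
    return pairs


notes = (
    "Stainless steel bolts hold up well in outdoor environments. "
    "ASTM A193 sets material standards for bolts, including stainless steel bolts. "
    "Trade compliance paperwork adds two weeks."
)
for (a, b), n in candidate_relations(notes, ENTITIES).most_common():
    print(f"{a} <-> {b}: {n} sentence(s); label this relation by hand")
```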

Step 4: Knowledge integration and construction.

Connect the “dots” (entities) and “lines” (relationships) from the previous step into a network. Picture building a huge mind map in which every node is rich, accurate, and linked to others. Open-source tools such as Neo4j can now assist with this, but the hardest part is still the quality of the content itself.
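To make “dots and lines” concrete, here is a minimal in-memory stand-in for a graph database, assuming hand-curated (subject, relation, object) triples. It is not Neo4j's API; it only shows how the connected facts about one entity can be pulled out as plain-text context, for example to paste into an AI prompt.

```python
from collections import defaultdict

# Hypothetical (subject, relation, object) triples curated by a domain expert.
TRIPLES = [
    ("stainless steel bolts", "are suited for", "outdoor environments"),
    ("ASTM A193", "sets material standards for", "bolts"),
    ("stainless steel bolts", "are subject to", "trade compliance"),
]


class KnowledgeGraph:
    """Minimal in-memory graph: entities are nodes, relations are labeled edges."""

    def __init__(self):
        self.out_edges = defaultdict(list)  # subject -> [(relation, object)]
        self.in_edges = defaultdict(list)   # object  -> [(relation, subject)]

    def add(self, subject: str, relation: str, obj: str) -> None:
        if (relation, obj) not in self.out_edges[subject]:  # skip duplicate edges
            self.out_edges[subject].append((relation, obj))
            self.in_edges[obj].append((relation, subject))

    def facts_about(self, entity: str) -> list:
        """Render every edge touching `entity` as a plain-text fact."""
        facts = [f"{entity} {rel} {obj}" for rel, obj in self.out_edges.get(entity, [])]
        facts += [f"{subj} {rel} {entity}" for rel, subj in self.in_edges.get(entity, [])]
        return facts


kg = KnowledgeGraph()
for triple in TRIPLES:
    kg.add(*triple)
print("\n".join(kg.facts_about("stainless steel bolts")))
```

A dedicated graph database earns its keep once you need multi-hop queries and persistence; the data model (nodes plus labeled edges) stays the same.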

Step 5: Continuous updates.

A knowledge graph is not a build-once database. Your industry evolves, and new technologies, policies, and market reactions keep emerging. You need a mechanism that regularly “feeds” newly acquired knowledge into the graph to keep it alive.
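One simple way to sketch such a mechanism, assuming you store each fact together with the date it was last confirmed: re-feeding a fact refreshes its date, and anything not re-confirmed within a chosen window (180 days here, an arbitrary assumption) is flagged for review.

```python
from datetime import date, timedelta


def merge_updates(graph: dict, updates: list, confirmed_on: date) -> None:
    """Insert new (subject, relation, object) triples or refresh existing ones."""
    for triple in updates:
        graph[triple] = confirmed_on


def stale_facts(graph: dict, today: date, max_age_days: int = 180) -> list:
    """List triples not re-confirmed within max_age_days (an assumed review window)."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(t for t, seen in graph.items() if seen < cutoff)


graph = {}  # triple -> date last confirmed
merge_updates(graph, [("stainless steel bolts", "are suited for", "outdoor environments")], date(2025, 1, 10))
merge_updates(graph, [("ASTM A193", "sets material standards for", "bolts")], date(2025, 8, 20))
print(stale_facts(graph, today=date(2025, 9, 1)))
```

Flagged facts aren't deleted automatically; the idea is that a person re-checks them before they keep feeding the AI.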

Does this method sound complicated? It's certainly more work than typing in a few keywords. But let's look at a real case.

A MedTech company's WordPress blog was once stuck in a content-homogenization rut. Its AI-generated cardiovascular health articles closely resembled content on other health platforms, and traffic was mediocre. The company then changed strategy and brought in two practicing cardiologists as content consultants.

The partnership model was simple: the doctors supplied their latest clinical observations, unpublished case studies, and common patient misconceptions. The company combined this in-depth, first-hand information with relevant medical literature and data reports into a structured “cardiovascular disease intervention knowledge base.” It then used AI tools (such as Duck & Pear AI Writing, a system that supports deeply customized input) to generate first drafts from this knowledge base.

The result? Their articles began to cover topics rarely discussed in the industry, such as the real clinical considerations behind individualized drug-dosage adjustment, and analyses of long-term follow-up data for the same stent under different degrees of vessel tortuosity. This content, almost impossible to find in public online sources, is exactly the substantive material professional readers (other doctors, pharmaceutical representatives, and well-informed patients) most want. In under six months, the blog became a recognized authoritative source in its vertical, and both dwell time and share rate increased several-fold.

This case reveals the real power of integrating vertical knowledge: it frees AI from the “average optimal solution” and lets it produce a “professional optimal solution.” When the AI's “brain” is filled with your exclusive experience, in-depth data, and professional insight, its output is differentiated from the start. The difference isn't a cosmetic tweak to writing style; it is a generational lead in the value of the information itself.

A knowledge graph is a custom “professional brain” for your AI. It gives your content a depth, accuracy, and timeliness that tweaking writing-style parameters can never achieve. Your site stops being a content-distribution site and becomes a knowledge node that consistently produces industry insight.

Of course, building a vertical-domain knowledge graph is a systematic project that may not suit everyone. If it sounds too heavy for you, or your field leans more on personal experience, there's another way: let the AI learn your personal style and way of thinking directly.

Train your own style model and let the AI write your flavor!

In the last chapter we found a path to professional depth: using a vertical-domain knowledge graph to build technical barriers around your site's content. But if you're an individual webmaster without a deep domain knowledge base, or you run a general lifestyle blog, that path may sound daunting.

Don't worry. If professional depth isn't an option, there's another dimension to break out on: style.

Yes, style. It sounds abstract, but that abstraction is exactly the distinctive flavor that tells readers “this is one of his” the moment they click. And AI is precisely capable of helping you capture that flavor, lock it in, and reproduce it at scale.

I'm asked all the time: why does my output from the same AI writing tools have no flavor and read like an instruction manual, while other people get content with twists, rhythm, and a personal touch? The core difference is whether you've given the AI a clear set of instructions about “you.”

This instruction set isn't a simple “imitate XX's style”; it's systematic style modeling. Jumping straight into code and algorithms may be intimidating, so let's start with the idea. The core logic is to extract the elements that make up your style from your past articles (language habits, sentence structure, vocabulary preferences, even emotional rhythm) into a set of rules and frameworks a computer can understand. The AI then writes new content within that framework.

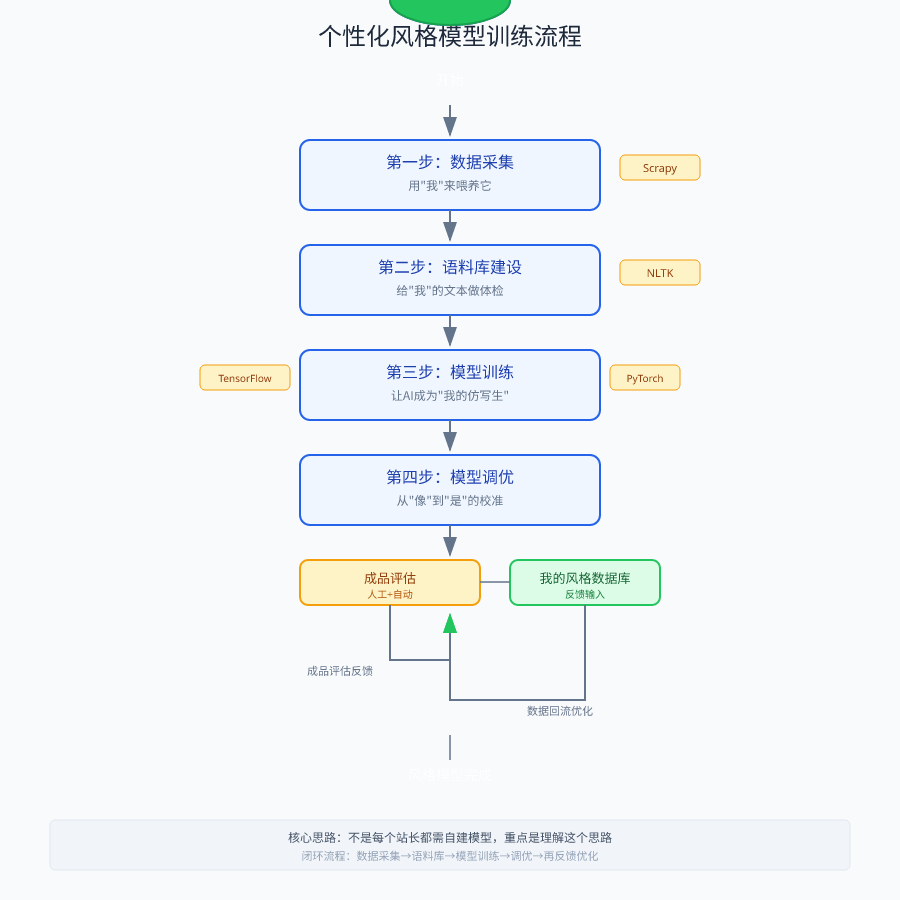

How exactly do you do it? Here's the four-step process I've distilled.

Step 1: Data collection - feeding it with “me”

Your past articles are the best training material. The keys here are “comprehensive” and “clean.”

- Comprehensive: Gather all your published articles (provided they are high-quality pieces that truly represent your style) from WeChat Official Accounts, blogs, Zhihu, and elsewhere. If the content is scattered across platforms, use a simple crawler (for example, Python's Scrapy) to collect it systematically. Don't do it by hand; it's too tiring.

- Clean: Remove articles that were reposted, co-written, or generated by AI just to test a tool. Keep only the “pure” you.

The core mindset here: you're not padding a word count; you're building a high-purity corpus of “me.” The quality of this step directly determines the flavor of the final model's output.
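The “clean” filter can be sketched as follows. The `Article` fields (`authors`, `reposted`, `ai_test`) are hypothetical metadata you would need to record yourself; the code also drops very short pieces and verbatim cross-posts of the same article so duplicates don't skew your corpus.

```python
from dataclasses import dataclass


@dataclass
class Article:
    # Hypothetical metadata; record these however you track your posts.
    title: str
    body: str
    authors: tuple
    reposted: bool = False
    ai_test: bool = False


def clean_corpus(articles: list, me: str, min_words: int = 300) -> list:
    """Keep solo-authored, original, non-test articles, dropping verbatim duplicates."""
    kept, seen = [], set()
    for a in articles:
        if a.reposted or a.ai_test or a.authors != (me,):
            continue  # reposted, AI test output, or co-written
        words = a.body.split()
        if len(words) < min_words:
            continue  # too short to say much about style
        key = " ".join(words).lower()
        if key in seen:
            continue  # the same piece cross-posted on another platform
        seen.add(key)
        kept.append(a)
    return kept
```

The 300-word minimum is an assumed default; lower it if your best writing is short-form.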

Step 2: Corpus building - a health check of “my” text

Once you have the raw text, structure it so a machine can read it. This isn't simple categorization but deep profiling of the text with Natural Language Processing (NLP) tools such as the NLTK library.

- Identify your high-frequency words and signature vocabulary: Which conjunctions do you reach for, “and” or “yet”? Which adjectives do you prefer, “stunning” or “surprising”? Do you have catchphrases or technical terms you coined yourself?

- Analyze the rhythm of your sentences: How long is your average sentence? Do you favor long, sprawling sentences or short, crisp ones? Do you lean on parallel structures, or on complex sentences with transitions?

- Capture your paragraph structure: How do you open, transition, and close? Do you usually tell a story first, or lead with the point?

- Sense your emotional tone: Is your writing cool, aggressive, humorous, or calm overall?

This process “vectorizes” your text, turning a felt sense of style into a set of quantifiable data labels. It's similar to how top creator Packy McCormick feeds the AI he uses as his “editor-in-chief” style guides from authors he admires: in essence, it teaches the AI what “good” means.
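A first pass at this profile needs nothing more than counting. The sketch below uses only the standard library as a stand-in for NLTK, assumes English text (Chinese would need a word segmenter first), and uses a deliberately tiny, illustrative stopword list. It reports average sentence length, your most frequent content words, and an “and” versus “yet” tally.

```python
import re
from collections import Counter
from statistics import mean

# Deliberately tiny, illustrative stopword list; a real profile needs a fuller one.
STOPWORDS = {"the", "a", "an", "and", "or", "of", "to", "in", "is", "it", "i", "you"}
WORD = re.compile(r"[a-z']+")


def style_profile(text: str, top_n: int = 5) -> dict:
    """Summarize a few measurable style signals of an English text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = WORD.findall(text.lower())
    lengths = [len(WORD.findall(s.lower())) for s in sentences]
    content_words = [w for w in words if w not in STOPWORDS]
    return {
        "avg_sentence_len": round(mean(lengths), 1) if lengths else 0.0,
        "top_words": Counter(content_words).most_common(top_n),
        "and_vs_yet": (words.count("and"), words.count("yet")),
    }


sample = "I love tea. Tea is calm and warm. Yet coffee wins!"
print(style_profile(sample, top_n=1))
# {'avg_sentence_len': 3.7, 'top_words': [('tea', 2)], 'and_vs_yet': (1, 1)}
```

Run it over your whole cleaned corpus and the numbers become the baseline you later compare AI drafts against.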

Step 3: Model training - let the AI become “a writer who imitates me”

With a structured corpus, you can train a model using a deep learning framework (such as TensorFlow or PyTorch). The technical details sound complicated, but roughly speaking, you're having the AI play a game of fill-in-the-blanks in which the answers follow the probability distribution embodied in your past articles.

The training goal: given a topic or an opening, the model generates the words or sentences “you” would most likely write next, based on the statistical patterns of your language style.

To be clear, you usually don't train a large model from scratch (the compute and data requirements are too high). It's more realistic to pick an open-source base model (for example, a pre-trained model of suitable size) as a “seed” and fine-tune it on your corpus. It's like taking a student with a solid liberal-arts background and teaching them your signature techniques.
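The “fill in the blanks” intuition can be shown with a toy bigram model: count which word followed each word in your past writing, then predict the most frequent follower. This is not fine-tuning and not how a neural model works internally; it only illustrates what “following the probability distribution of your articles” means at the smallest scale.

```python
from collections import Counter, defaultdict
from typing import Optional


def train_bigrams(corpus: list) -> dict:
    """For each word, count which words followed it in your past writing."""
    model = defaultdict(Counter)
    for doc in corpus:
        words = doc.lower().split()
        for prev, nxt in zip(words, words[1:]):
            model[prev][nxt] += 1
    return model


def fill_blank(model: dict, prev_word: str) -> Optional[str]:
    """Return the word most often written after prev_word, or None if unseen."""
    followers = model.get(prev_word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


my_posts = [
    "honestly this plugin saved my weekend",
    "honestly this theme is overrated",
    "this plugin is worth every cent",
]
model = train_bigrams(my_posts)
print(fill_blank(model, "honestly"), fill_blank(model, "this"))  # this plugin
```

A fine-tuned language model does the same kind of prediction over far longer contexts, which is why it can capture rhythm and structure rather than just word pairs.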

Step 4: Model tuning - calibrating from “like” to “is”

Output that merely “sounds like you” isn't enough; you want it to “be you,” and even better. This step combines manual and automated evaluation.

- Manual feedback: Review several samples of the model's output yourself, marking passages that “read too much like a rehash of an old article,” places where “the style is off,” and places where “it feels right but the logic stalls.” Feed this feedback back to the model as reinforcement signals.

- Automated assessment: Use algorithmic metrics (such as perplexity) to help judge whether the generated text sits at the right stylistic “distance” from your corpus. Too close is plagiarism; too far is off-key.

After a few rounds of tuning, the model moves closer to the ideal balance: keeping the “fingerprints” of your language while recombining them creatively around new topics.
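Here is a toy version of the automated check, under clearly simplified assumptions: perplexity is computed with an add-one-smoothed bigram model built from your corpus (not from your fine-tuned model), and a separate ratio counts how many five-word sequences were copied verbatim from the corpus. Lower perplexity means “more predictable from your own writing”; a high copied ratio signals the “too close” end. Where exactly “too close” and “too far” lie is a judgment call you would calibrate by hand.

```python
import math
from collections import Counter


def bigram_perplexity(corpus: list, text: str) -> float:
    """Perplexity of `text` under an add-one-smoothed bigram model of `corpus`.

    Lower values mean the text is more predictable from your own writing.
    """
    contexts, bigrams, vocab = Counter(), Counter(), set()
    for doc in corpus:
        words = doc.lower().split()
        vocab.update(words)
        contexts.update(words[:-1])            # words that had a follower
        bigrams.update(zip(words, words[1:]))
    words = text.lower().split()
    vocab.update(words)                        # include unseen words in V
    pairs = list(zip(words, words[1:]))
    if not pairs:
        raise ValueError("text needs at least two words")
    log_prob = sum(
        math.log((bigrams[p] + 1) / (contexts[p[0]] + len(vocab))) for p in pairs
    )
    return math.exp(-log_prob / len(pairs))


def copied_ngram_ratio(corpus: list, text: str, n: int = 5) -> float:
    """Share of the text's n-word sequences that appear verbatim in the corpus."""
    def ngrams(words):
        return [tuple(words[i:i + n]) for i in range(len(words) - n + 1)]

    seen = set()
    for doc in corpus:
        seen.update(ngrams(doc.lower().split()))
    grams = ngrams(text.lower().split())
    if not grams:
        return 0.0
    return sum(g in seen for g in grams) / len(grams)


corpus = ["the cat sat on the mat"]
print(bigram_perplexity(corpus, "the cat sat on the mat"))
print(bigram_perplexity(corpus, "dog runs far away quickly"))
print(copied_ngram_ratio(corpus, "the cat sat on the mat"))
```

In practice you'd want a draft with moderate perplexity (it sounds like you) and a low copied ratio (it isn't a recycled old post).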

At this point you may be thinking: I run a WordPress site, I'm not an algorithm engineer; Scrapy and TensorFlow are beyond me.

That's exactly the point I want to stress: not every webmaster needs to deploy this tech stack personally. What matters is understanding the idea and expecting this “style customization” capability from the AI writing tool you use.

That's why tools like Duck & Pear AI Writing deserve attention. Its design treats “custom style” as a core module: you can establish a baseline for your writing by uploading your own best samples or by carefully writing a “style description” (for example, whether the tone is rigorous and professional or humorous and friendly, and which sentence structures you prefer).

🔗 Related resources: Duck & Pear AI Writing

A content creation tool that supports custom article style templates and is dedicated to incorporating personal style into the AI generation process.

You don't have to touch any code, but you do need to tell the tool clearly “what flavor I want.” In essence, you're building and invoking a “style model” through a friendlier interface. This is the key to keeping your site from churning out blurry, “average-optimal” content.

Training a style model solves the “how to write” problem. When AI can produce content grounded in specialized knowledge (Chapter 4) and tell it in your voice (this chapter), the curse of content homogenization is truly broken. But this is still preparation at the tool level. What ultimately decides whether your site is a “smart site with a human touch” or a “cold AI content farm” is how people and machines are positioned in the real workflow that runs every day.

The right way to collaborate: AI does the hands-on work, humans call the shots

Earlier we covered three directions for differentiation: vertical knowledge integration, professional-depth barriers, and a personal style model. But these are only preparations; what really decides your site's character is how people and machines divide the work in the daily workflow.

Let's look at a case I particularly admire: top creator Packy McCormick, whose newsletter Not Boring is well known in tech circles for its distinctive style and strong opinions. How does he use AI? The core idea fits in one sentence: move AI from “writer” to “editor-in-chief.”

He used Claude's Projects feature to build an editorial knowledge base, uploading style-guide documents from tech writers he admires. He then gave the AI detailed instructions to act as a world-class editor: critically reviewing the article's writing style, structure, strength of argument, data support, and novelty of ideas, and suggesting improvements, all while preserving his original voice and style.

This is an important shift in thinking: AI isn't the one who writes for you; it's the one who picks holes in your work. Think of it as a tireless, widely read “second pair of eyes” with no ego. Once you've written the first draft, it proofreads and suggests improvements. That is the right way for humans and machines to collaborate.

In practice, when you integrate AI into a WordPress workflow, there are a few specific pitfalls you have to watch personally; you can't count on the AI for these.

Verify information by hand. Information from ChatGPT may be outdated or even wrong. The version discussed here was trained on data only up to 2021 and is largely blind to what happened afterward. Worse, it's trained to be “neutral and unbiased,” so if you ask for a strongly opinionated piece, it often hands you a competent instruction manual instead. I once asked an AI to analyze an emerging technology; it gave me a list of pros and cons and concluded that “there are both advantages and disadvantages,” which says nothing at all. You are responsible for the facts in your article: look up the sources and find the data yourself.

Don't leave images entirely to AI. AI-generated images always look a bit “AI-flavored”: too perfect, too smooth, lacking realism. Licensed stock-image APIs are now mature; using work by real photographers from sources like Unsplash carries lower copyright risk and is easier on readers' eyes.

Build internal links yourself. The AI doesn't know which posts on your site relate to the topic at hand, and it can't create connections between your content for you. Yet internal linking is a key lever for improving SEO and dwell time on WordPress sites. Ask yourself: which older posts on the site does this new post connect with? Then add the links manually.
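A script can't make the editorial decision for you, but it can shortlist candidates. The sketch below, assuming you have your existing posts as a mapping of URL to title or excerpt (for example, from an export), ranks them by keyword overlap (Jaccard similarity) with the new post. The URLs and stopword list are illustrative.

```python
import re

# Illustrative stopword list; extend it for your niche.
STOPWORDS = {"the", "a", "an", "and", "or", "for", "to", "of", "in", "on", "how", "your", "with"}


def keywords(text: str) -> set:
    """Lowercased alphanumeric tokens minus stopwords."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def suggest_internal_links(new_post: str, old_posts: dict, top_n: int = 3) -> list:
    """Rank existing posts (url -> title/excerpt) by Jaccard keyword overlap."""
    new_kw = keywords(new_post)
    scored = []
    for url, text in old_posts.items():
        kw = keywords(text)
        union = new_kw | kw
        score = len(new_kw & kw) / len(union) if union else 0.0
        if score > 0:
            scored.append((round(score, 2), url))
    scored.sort(key=lambda pair: (-pair[0], pair[1]))  # best first, then by URL
    return scored[:top_n]


old = {
    "/caching-guide": "WordPress caching plugins compared",
    "/pasta": "Easy pasta recipes",
    "/seo-basics": "WordPress SEO basics",
}
print(suggest_internal_links("Speed up WordPress caching plugins", old))
# [(0.5, '/caching-guide'), (0.14, '/seo-basics')]
```

Treat the output as a reading list: open each candidate and decide whether the link genuinely helps the reader before adding it.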

Add structure for readability. AI is a text generator; it doesn't care about layout or how quickly readers get to the point. Manually add a table of contents, an FAQ, and bulleted lists to make the article more structured. These elements add no new information, but they dramatically lower the barrier to reading.
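If your draft is HTML, part of the table-of-contents chore can be scripted. This sketch gives each `<h2>`/`<h3>` an `id` anchor and returns the outline you would link from a TOC. It assumes headings don't already carry an `id`, doesn't de-duplicate repeated titles, and produces empty slugs for non-Latin headings; a regex is adequate for simple post bodies but is not a full HTML parser.

```python
import re
from html import escape, unescape

# Matches <h2>...</h2> or <h3 ...>...</h3>; fine for simple post bodies only.
HEADING_RE = re.compile(r"<h([23])([^>]*)>(.*?)</h\1>", re.IGNORECASE | re.DOTALL)


def slugify(text: str) -> str:
    """ASCII-only slug; non-Latin headings would need a different scheme."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


def add_toc_anchors(html: str) -> tuple:
    """Give each h2/h3 an id and return (new_html, [(level, title, anchor), ...]).

    Assumes headings have no existing id and that titles are unique.
    """
    toc = []

    def tag_heading(match):
        level, attrs, inner = match.group(1), match.group(2), match.group(3)
        title = unescape(re.sub(r"<[^>]+>", "", inner)).strip()
        anchor = slugify(title)
        toc.append((int(level), title, anchor))
        return f'<h{level}{attrs} id="{anchor}">{inner}</h{level}>'

    return HEADING_RE.sub(tag_heading, html), toc


body = '<p>Intro</p><h2>Why It Matters</h2><p>Body</p><h3 class="s">Step &amp; Tips</h3>'
new_body, toc = add_toc_anchors(body)
for level, title, anchor in toc:
    # Indent h3 entries under their h2 when printing the outline.
    print("  " * (level - 2) + f'<li><a href="#{anchor}">{escape(title)}</a></li>')
```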

The most important point: inject personal insight. This is the part AI will never do well. Lessons learned, mistakes you've made, and your own judgment from inside the industry are what truly set your content apart. AI can give you the skeleton, but you have to fill in the meat yourself.

At the end of the day, the core principle fits in one sentence: AI builds the skeleton; humans add the muscle and inject the soul.

What's the skeleton? Topic selection, data collection, outline construction, and first-draft generation: AI can handle these efficiently. What's the muscle? Deepening arguments, validating data, adding cases, and polishing details: these need your personal attention. What's the soul? A distinctive point of view, warm expression, a personal attitude running through the text. Sorry, only you can supply that.

If the steps above feel too fiddly and you'd like a ready-made tool that runs this workflow smoothly, here's one to consider: Duck & Pear AI Writing (https://www.yaliai.com/). It's designed around human-machine collaboration: custom article-style templates let you build your personal style into the tool; one-click generation gets you a first draft quickly; smart illustrations and internal-link suggestions help you fill in the details; and SEO optimization and data-insight features help your content not only have flavor but also get found.

More importantly, it builds the “AI does the hands-on work, humans call the shots” idea into the product flow: you don't need to assemble a complex workflow yourself, because the tool itself guides you through what you need to do.

With this collaborative mindset in place, the next question is practical: how do you know you're really doing it right and not just fooling yourself? The next chapter, “Three Tips to Detect if Your Site is Losing Its Soul,” gives you an actionable validation framework.

Three Tips to Detect If Your Site Is Losing Its Soul

In the last chapter, we defined AI's role and set up a workflow for human-computer collaborative writing. But a method is only a method; you have to test the results yourself. How can you tell whether your site really has a human voice, or is just churning out the same homogenized filler in a different pose? Here are three hard indicators you can use to check yourself.

Tip #1: The Cover-the-Logo Test - Does Your Content Reflect Unique Professional Experience?

Pick an article you published recently, cover the site logo and the author's name, and ask yourself: would this article look perfectly at home on a competitor's website? If the answer is “yes,” your content is just generic knowledge repackaging that doesn't draw on deep knowledge of your vertical or inject your personal experience. Truly differentiated content lets readers tell at a glance that “only this site could have written this,” because it contains the pitfalls you've fallen into, the data you've verified, and your own judgment.

Tip #2: The Comment Section Thermometer - Can Readers Sense a Real Person?

Go through the comments and private messages on your last five articles. Are readers saying “interesting point, I've had a similar experience,” or are they asking “was this written by AI?” A more direct self-check: does your writing take a clear stand on what is good and what is bad? Do you dare to offend anyone? AI writing tools can mimic a style, but they won't hit hard, and they won't suddenly drop a line like “this thing burned me for 300 bucks last week.” If reading your own writing feels like reading an instruction manual, your content has lost its soul.

Tip #3: Update Pacing Review - Is Frequency Out of Balance with Quality?

Open your content calendar and review your publishing history for the past month. If, in blind pursuit of daily updates, you're publishing AI-generated content without the “manual soul injection” step described in Chapter 6 (fact-checking, adding personal insight, reworking the structure), then you are using tactical diligence to cover up strategic laziness. In human-computer collaborative writing, one substantial piece every three days beats three pieces of correct but empty filler every day.

- Run the cover-the-logo test: pick a recent article at random, cover the site logo and author information, and judge whether it could appear on a competitor's site without looking out of place.

- Check for professional experience: confirm that the article contains at least one unique judgment grounded in pitfalls you've personally hit, data you've verified in the field, or deep knowledge of your vertical.

- Read the comment section thermometer: review reader feedback on your last five posts and make sure the discussion centers on resonance and shared experiences rather than on whether the content was AI-generated.

- Check for a human voice: confirm that the article takes a clear stance on what is good and bad, includes industry details only someone with firsthand experience would know, or offers a strong opinion willing to offend a particular group.

- Review the publishing calendar: pull the last 30 days of publishing records and flag any AI-generated content that was published directly, skipping the “manual soul injection” step, just to keep up daily updates.

- Check the quality-frequency balance: confirm that your current pace leaves room for in-depth content (such as one substantial piece every three days) rather than mechanical daily updates at the expense of quality.

- Confirm AI's role: check that AI in your workflow is strictly limited to “odd jobs” (building the skeleton) and that humans have the final say on “injecting the soul” (opinions and attitude).

If any of these three checks turns up a problem, don't panic: go back to the workflow in Chapter 6 and readjust. Publish less often, reposition AI as a “handyman” rather than a “ghostwriter,” and make yourself add to each article at least one piece of little-known knowledge or a distinctive point of view that only you could offer. Remember: AI writing tools are just tools, and personalized style models are just aids. The real soul always stays in your hands, in those moments when you're willing to dig a little deeper and tell your readers a little more of the truth.